You open your monthly CX digest and skim the summary at the top. It says something like: “Customers continue to appreciate product quality while expressing concerns about pricing and subscription flexibility.”

You pause, because you’ve read that sentence before. Maybe last month. Maybe the month before that. The score hasn’t really moved, the themes look familiar, yet the reports keep arriving, telling what feels like the same story.

If you run an NPS, CSAT, or CES program, this probably sounds familiar. Over time, CX reports can start to feel repetitive, and it’s easy to assume the issue lies in the reporting itself.

But after analyzing thousands of real feedback campaigns across NPS, CSAT, and CES (anonymized data from customer programs running on Retently), we found something different. In many cases, repetitive CX digests aren’t a reporting problem at all; they reflect a feedback behavior pattern.

In this article, we’ll show how customer feedback tends to fall into four distinct behavioral archetypes, and how recognizing them changes the way CX insights should be analyzed and reported.

Key Takeaways

- Not all feedback campaigns behave the same way. Surveys differ widely depending on response volume, question scope, and the type of customers responding.

- Repetition in CX reports is often statistical, not analytical. In high-volume surveys, feedback distributions naturally stabilize, which means the same themes tend to appear month after month.

- Many feedback campaigns are inherently noisy or data-limited. Lower-volume surveys or broad prompts (like NPS) often produce shifting themes, while some campaigns simply don’t collect enough responses to support reliable analysis.

- The most effective CX reporting adapts to how feedback actually behaves. Once you recognize the pattern your campaign follows, the way you analyze trends and surface insights should change accordingly.

The Four Archetypes of Customer Feedback Campaigns

Most Voice of Customer (VoC) programs are built around a fairly simple but rarely questioned assumption: that feedback campaigns behave more or less the same way.

It doesn’t matter whether it’s a massive NPS program pulling in thousands of responses, a CSAT survey triggered after support interactions, or a smaller exit survey with only a handful of replies. The reporting rhythm usually looks familiar: same cadence, same format, same month-to-month comparison logic. At the center of it all is one simple question: “What changed compared to the previous period?”

And to be fair, sometimes that works really well. When feedback evolves gradually and the survey collects enough responses, period-to-period comparisons can surface useful shifts in customer sentiment or emerging issues. Other times it feels forced. And that’s because feedback doesn’t actually behave the same way across every campaign.

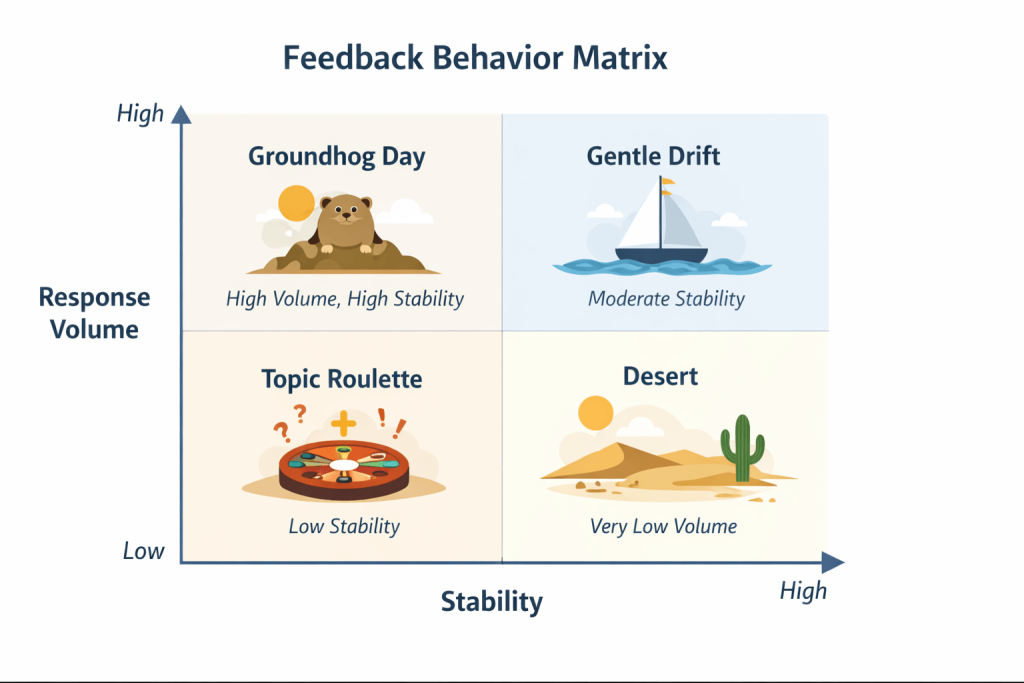

Despite differences in industry, product type, or customer base, most surveys we analyzed followed one of four distinct dynamics. Some campaigns produce extremely stable signals. Others evolve gradually, revealing new themes as time passes. Some behave unpredictably, with topics shifting dramatically from one reporting period to the next. And some simply don’t generate enough data to support meaningful analysis.

Recognizing which archetype a campaign belongs to can completely change how its results should be interpreted. What looks like repetitive reporting in one context may actually reflect a healthy, stable system, while what looks like sudden change in another context may simply be the natural volatility of smaller datasets.

Let’s start with the most stable of these patterns.

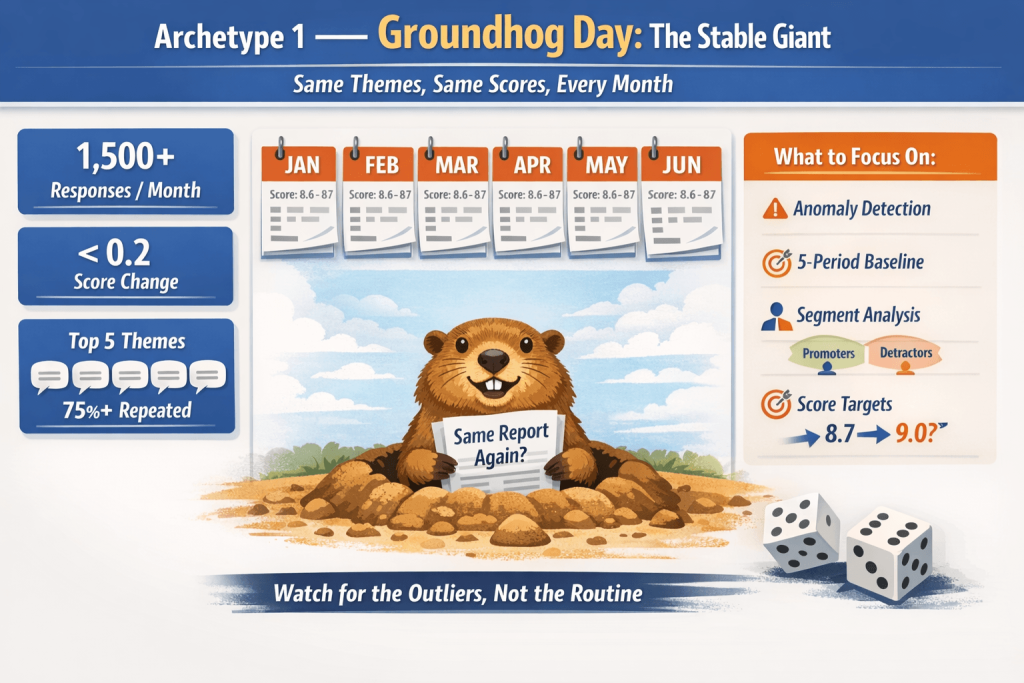

Archetype 1 – Groundhog Day: The Stable Giant

The first archetype is the one most likely to produce the feeling that “nothing new ever happens in our CX reports.”

We call it Groundhog Day because the feedback patterns look almost identical from one reporting period to the next. The same themes dominate the comments, the score barely moves, and the overall narrative of the feedback remains remarkably stable. When the monthly digest arrives, the summary feels familiar, not because the analysis is lazy, but because the underlying story genuinely hasn’t changed.

Campaigns that fall into this category usually share several structural characteristics. They tend to collect a very large volume of responses, often more than 1,500 per month, and they typically measure mature products or services with a stable and well-established customer base. These surveys are frequently broad in scope – such as Net Promoter Score (NPS) programs or general satisfaction surveys – where customers can comment on many aspects of the experience.

Despite the large number of responses, the score itself tends to move very little from one reporting period to the next. Changes smaller than 0.2 points are common, and the top five themes often overlap by more than 75% across consecutive reports.

A healthcare platform we analyzed, collecting over 8,000 NPS responses per month, showed this pattern almost perfectly. Across six consecutive months, the same core themes – appointment scheduling, billing transparency, provider communication, and wait times – dominated the feedback, while the average score stayed within a narrow range. Each monthly digest told essentially the same story.

We saw a similar pattern in a subscription-based consumer brand collecting around 1,600 NPS responses each month. Across multiple reporting periods, the top themes remained remarkably consistent with theme overlap ranging from 76% to 88% and scores clustered tightly between 8.6 and 8.7.

This is exactly what you would expect statistically. When large samples are collected repeatedly from the same audience, feedback distributions naturally converge. In a sense, it is like rolling a fair die 2,000 times: the bigger the sample, the less surprising the result becomes. If the customer experience itself has not changed in a meaningful way, the feedback will reflect that stability.
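To put rough numbers on that intuition, here is a minimal Python sketch of how the typical wobble in an average shrinks as the sample grows. It uses the die analogy rather than real survey data, and it assumes responses are independent; the `standard_error` helper is a name introduced here purely for illustration.

```python
import math

# Standard deviation of one roll of a fair six-sided die:
# the variance is (6**2 - 1) / 12 = 35/12.
DIE_STD = math.sqrt(35 / 12)


def standard_error(std_dev: float, n: int) -> float:
    """Standard error of a sample mean built from n independent responses."""
    if n <= 0:
        raise ValueError("n must be positive")
    return std_dev / math.sqrt(n)


# Compare how far the average typically drifts at different sample sizes.
for n in (20, 200, 2000):
    print(f"n={n:>4}: typical drift of the average is about {standard_error(DIE_STD, n):.3f}")
```

Under these assumptions, the average of 2,000 rolls typically drifts by about 0.04, against roughly 0.38 for 20 rolls. Real survey scores are not die rolls, but the same square-root relationship helps explain why large campaigns barely move unless the experience itself changes.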

However, this stability is not necessarily a problem or a sign that the reporting is failing. In many cases, it simply means the feedback system is accurately reflecting a stable customer experience.

The challenge appears when these stable campaigns are analyzed using the standard “what changed this month?” reporting logic. In highly stable datasets, meaningful changes are rare, so the comparison often produces summaries that feel repetitive or forced.

For Groundhog Day campaigns, the goal of CX reporting should shift away from searching for small month-to-month changes. Instead, the focus should move toward identifying meaningful deviations from the long-term pattern. Rather than asking what changed compared to last month, the more useful question becomes whether anything has changed enough to break the stability of the system.

In practice, that often means shifting from routine summaries to anomaly detection – flagging only the moments when the feedback meaningfully departs from its 5-period baseline.

The signals worth watching include:

- unusual spikes in negative themes

- emerging topics that were previously rare

- segment-level differences (for example, Promoters vs. Detractors)

- longer-term trends across quarters or seasons

- score-target analysis, for example: “You’re at 8.7, here are the three things still holding you back from 9.0.”

In other words, when feedback becomes statistically stable, CX reporting works best as an early warning system rather than a monthly narrative generator.
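One simple way to sketch this kind of check is a z-score against the last five periods. The example below is an illustration only, not a description of how any particular tool works: the `flag_anomaly` function, the threshold of three standard deviations, and the sample numbers are all assumptions.

```python
from statistics import mean, stdev


def flag_anomaly(history: list[float], current: float,
                 baseline_periods: int = 5, z_threshold: float = 3.0) -> bool:
    """Return True when `current` departs sharply from the recent baseline.

    `history` holds one value per past period, oldest first, for example
    the share of comments mentioning a theme or the average score.
    """
    if len(history) < baseline_periods:
        return False  # not enough history to define a baseline yet
    baseline = history[-baseline_periods:]
    center = mean(baseline)
    # Guard against a perfectly flat baseline, where the spread would be zero.
    spread = max(stdev(baseline), 1e-9)
    return abs(current - center) / spread > z_threshold


# Hypothetical share of comments mentioning billing over five months.
billing_share = [0.11, 0.12, 0.10, 0.11, 0.12]
print(flag_anomaly(billing_share, 0.12))  # within the usual range
print(flag_anomaly(billing_share, 0.19))  # a clear break from the baseline
```

The design choice here is to stay silent by default. A report built on a check like this only speaks up when a theme or score leaves its usual band, which suits campaigns where most months look alike.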

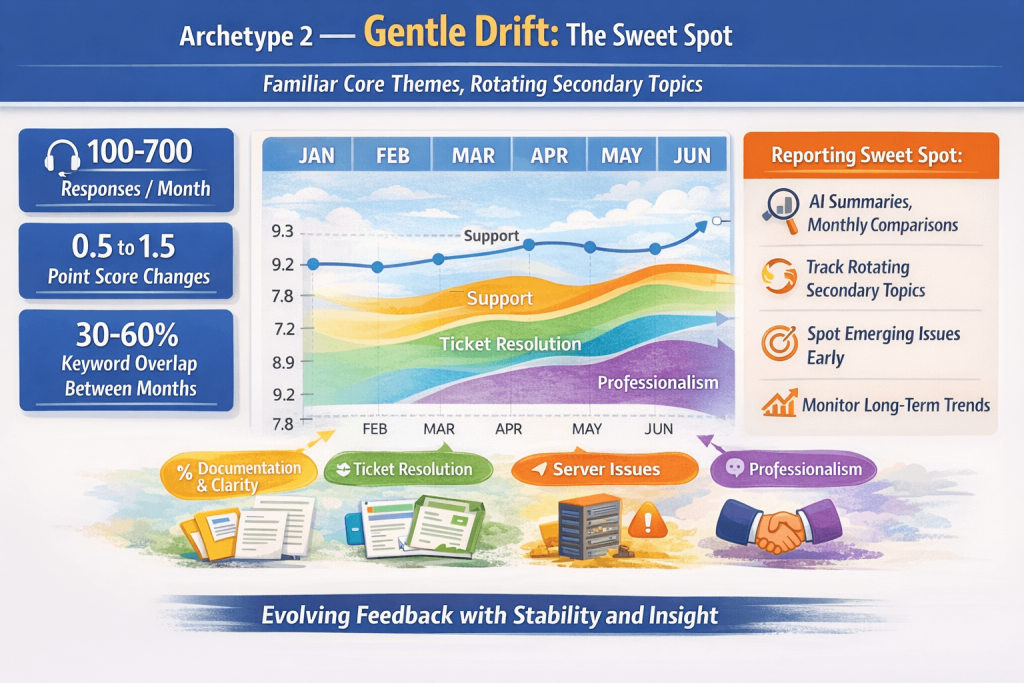

Archetype 2 – Gentle Drift: The Sweet Spot

If Groundhog Day represents the most stable form of feedback behavior, the second archetype sits right in the middle – and for many CX teams, it is the most useful pattern.

In these campaigns, feedback evolves gradually over time. The core themes that define the customer experience remain recognizable from one period to the next, while secondary topics appear, fade, and rotate as new issues or improvements emerge. Instead of producing the exact same report every month, the feedback tells a familiar story with small but meaningful variations.

This is what makes Gentle Drift the sweet spot. There is enough stability to anchor your understanding of the experience, but enough change to provide genuine news.

Campaigns that fall into this archetype tend to share several characteristics. They usually collect a medium-to-high volume of responses, often somewhere between 100 and 700 per month, which provides enough data to detect patterns without completely stabilizing the distribution. These surveys are frequently CSAT-based, especially when triggered after specific interactions such as support conversations, onboarding steps, or service requests. Because the question is tied to a defined moment in the customer journey, the feedback remains focused while still allowing room for variation.

In these campaigns, scores typically show moderate movement between periods – often in the range of 0.5 to 1.5 points – and the overlap between top themes tends to fall between 30% and 60%. Some themes persist, while others evolve gradually.

A good example comes from a B2B tech company’s support CSAT program. In that dataset, the same core themes appeared in almost every reporting period: support, fast response, and quick resolution. Those comments reflected the stable backbone of the experience and made it clear what customers consistently valued. But around that core, secondary themes rotated over time. In one period, customers highlighted professionalism and agent communication. In another, the emphasis shifted to ticket handling. Later, server issues and documentation clarity surfaced as new concerns. The central narrative remained consistent – customers appreciated responsive support – but each month still introduced a meaningful new angle.

We saw a similar pattern in a post-purchase CES survey from an ecommerce company collecting roughly 600 responses per month. Four core themes appeared consistently every month, while secondary themes rotated meaningfully. Shipping complaints spiked after a busy period, discount-related comments surged during a promotion, and a brief cluster of technical bug reports appeared and disappeared within a single month. The score itself remained extremely stable, moving only from 5.88 to 5.97 across the full period.

This is exactly why Gentle Drift works so well for traditional CX reporting. The stable core gives you a benchmark, while the rotating secondary themes reveal where attention may be needed next. If one of the core themes suddenly weakens, that is a strong signal. And if a peripheral topic keeps appearing more often, it may be the first sign of a larger issue emerging.

For CX teams, this is often the most informative environment. Period-to-period comparisons become genuinely useful because there is enough continuity to track the broader narrative, but also enough variation to surface new insights.

In practice, Gentle Drift campaigns benefit from reporting that focuses on identifying incremental change. Instead of looking for dramatic shifts, the analysis can highlight subtle movements in the feedback landscape, such as:

- new topics beginning to appear more frequently

- previously minor issues gradually gaining visibility

- small improvements in recurring pain points

- temporary spikes related to operational events

In this context, AI summaries and monthly comparisons tend to work particularly well. Because the feedback evolves slowly rather than remaining static or changing chaotically, each new report can add a small piece to the broader story of how the customer experience is developing over time.

For many CX programs, Gentle Drift represents the ideal analytical environment – stable enough to track, yet dynamic enough to keep generating meaningful insight.

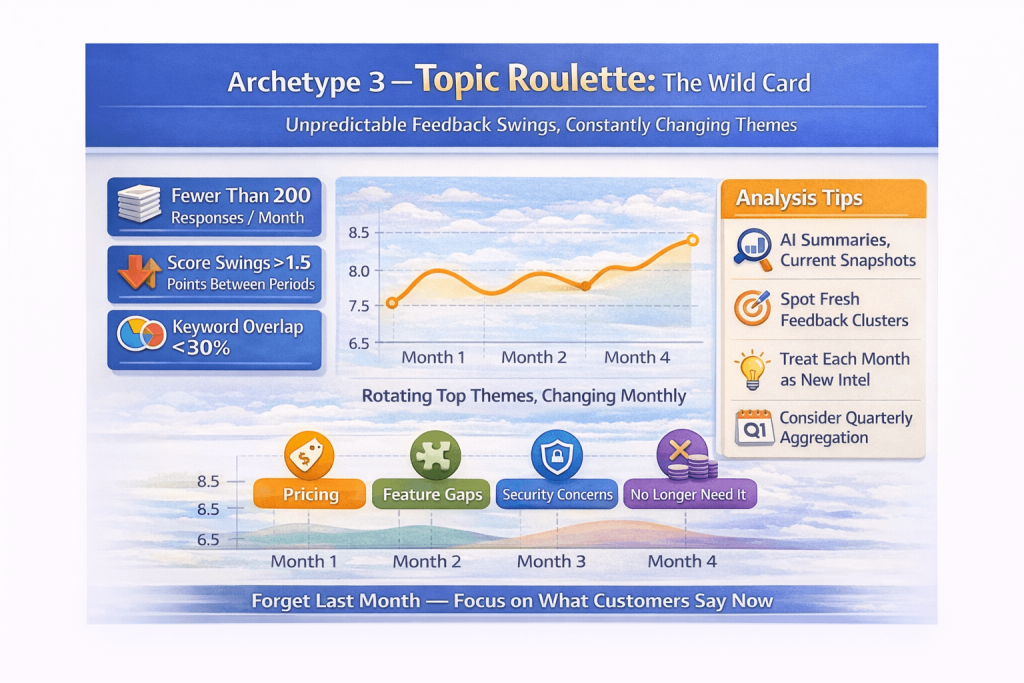

Archetype 3 – Topic Roulette: The Wild Card

The third archetype behaves very differently from the previous two. In these campaigns, feedback shifts dramatically from one reporting period to the next.

One month, the dominant topic might be pricing. The next month it could be missing features. The month after that, customers might focus on integrations, onboarding friction, or simply say they no longer need the product. When you compare reports side by side, the themes can look almost unrelated. Last month’s top issue may not even appear this month.

This is what makes Topic Roulette the wild card. Instead of telling a consistent story over time, the feedback keeps reshuffling. Trying to compare one period to the next can start to feel less like trend analysis and more like comparing two completely different datasets.

Campaigns in this archetype usually share a recognizable profile. They tend to collect lower response volumes – often fewer than 200 responses per period – and they are frequently tied to broad prompts, especially NPS questions or cancellation and exit surveys. Because respondents are free to comment on almost any aspect of their experience, the topic space stays wide, and the smaller sample size makes the rankings much more sensitive to short-term shifts.

In these campaigns, scores often swing by more than 1.5 points between periods, and keyword overlap between months can fall below 30%. In other words, both the score and the themes can move sharply from one report to the next.

A good example comes from a B2B SaaS company’s cancellation NPS survey, collecting roughly 150 to 190 responses per month. In one period, the dominant feedback centered on pricing. In the next, the conversation shifted toward security concerns. Later, server costs, feature gaps, and “don’t need it anymore” emerged as leading reasons for leaving. There was almost no continuity between periods. Each month introduced a different set of cancellation drivers.

This happens because smaller samples give each individual response much more weight. In a survey with 150 responses, a cluster of just five or six similar comments can completely reshape the keyword rankings. Add a broad question like NPS on top of that, and the result is exactly what you would expect: an open-ended prompt producing an open-ended set of issues, with a different mix rising to the top each time.
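The effect is easy to reproduce with a toy example. The sketch below uses made-up theme counts for a month with about 150 responses (the numbers are hypothetical, not taken from the dataset) and shows how six extra mentions of one topic reorder the top three. The `top_themes` helper is introduced here for illustration.

```python
from collections import Counter


def top_themes(counts: Counter, k: int = 3) -> list[str]:
    """Return the k most-mentioned themes, breaking ties alphabetically."""
    ranked = sorted(counts.items(), key=lambda item: (-item[1], item[0]))
    return [theme for theme, _ in ranked[:k]]


# Hypothetical theme mentions in a low-volume month (illustrative only).
month = Counter({
    "pricing": 14,
    "missing features": 12,
    "integrations": 11,
    "onboarding": 10,
    "security": 9,
    "support": 8,
})

before = top_themes(month)
month["security"] += 6  # suppose six more respondents mention security
after = top_themes(month)

print(before, "->", after)
```

Six comments are only about 4% of 150 responses, yet in this toy month they are enough to move security from fifth place to first and push integrations out of the top three.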

We saw an even more extreme version of this in a media company’s cancellation NPS survey collecting only around 45 responses per month. In one period, the leading themes were subscription management, cost, and content coverage – fairly balanced. A few months later, the cancellation process had emerged as a new top theme while content coverage had nearly disappeared. Each monthly report looked like it was describing a different product, even though the underlying experience hadn’t changed dramatically. Over the same stretch, the score moved from 5.00 to 6.04 – a full-point swing on a very small sample.

The trap appears when teams try to apply the same reporting logic used for more stable campaigns. If every report asks “What changed compared to last month?”, the answer may simply be: almost everything. A comparison will label some topics as “new,” others as “gone,” and still others as “resolved,” even when those shifts are mostly the natural volatility of a small dataset rather than a meaningful change in the customer experience.

For campaigns that behave like Topic Roulette, the most useful approach is to shift the focus away from trend comparisons and toward understanding what customers are saying right now.

Instead of trying to build a narrative across periods, the analysis should emphasize signals such as:

- current-period snapshots

- actionable clusters of similar issues

- fresh exit or cancellation reasons emerging in the latest feedback

- longer aggregation windows, such as quarterly reviews, to smooth out monthly noise
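As a rough sketch of the last point, monthly theme counts can be pooled into longer windows before ranking. The `pool_periods` function and the sample counts below are hypothetical, and the three-month window is just one possible choice.

```python
from collections import Counter


def pool_periods(monthly_counts: list[Counter], window: int = 3) -> list[Counter]:
    """Sum theme counts over consecutive, non-overlapping windows of months.

    A trailing incomplete window is dropped rather than reported early.
    """
    pooled = []
    for start in range(0, len(monthly_counts) - window + 1, window):
        total = Counter()
        for month in monthly_counts[start:start + window]:
            total.update(month)  # Counter.update adds counts together
        pooled.append(total)
    return pooled


# Hypothetical monthly theme counts from a small cancellation survey.
months = [
    Counter({"pricing": 5, "security": 1}),
    Counter({"security": 6, "pricing": 2}),
    Counter({"missing features": 4, "pricing": 3}),
    Counter({"integrations": 5}),  # start of the next, still incomplete quarter
]

quarters = pool_periods(months)
print([m.most_common(1)[0][0] for m in months[:3]])  # top theme changes every month
print(quarters[0].most_common(1)[0][0])              # top theme for the pooled quarter
```

In this made-up data the monthly leader changes each time, while the pooled quarter points to pricing, which never dominated a later month but was mentioned in all three.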

In practice, these surveys function less like trend systems and more like qualitative intelligence streams. Each reporting period provides a fresh snapshot of what a subset of customers is experiencing at that moment.

While this volatility can make reporting more challenging, it can also be valuable. Topic Roulette campaigns often surface new or unexpected signals that might not appear in more stable datasets. The key is recognizing that the data behaves differently and adjusting the analytical approach accordingly.

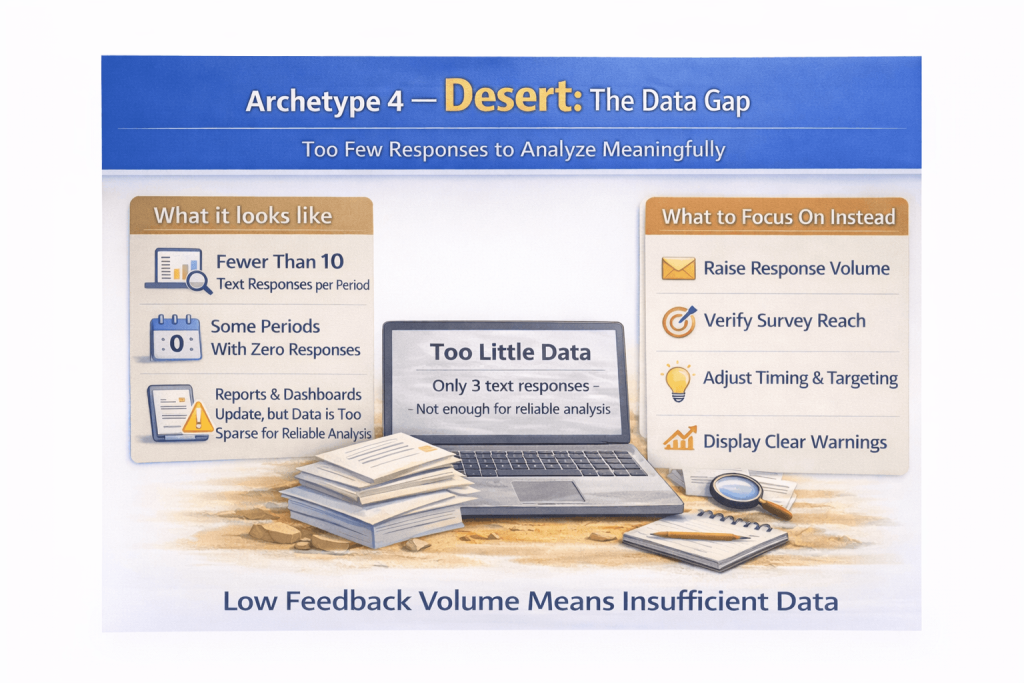

Archetype 4 – Desert: The Data Gap

The final archetype is the simplest and often the most overlooked. In these campaigns, the main issue isn’t stability or volatility. It’s simply a lack of usable data.

Some surveys do not collect enough responses to support meaningful thematic analysis. A campaign might receive only a handful of comments in a given period, or sometimes none at all. In these situations, trying to extract patterns from the feedback can quickly become misleading.

With very small samples, each individual response carries disproportionate weight. If three customers happen to mention the same issue in the same week, that topic might suddenly appear to dominate the feedback. In the next reporting period, a completely different set of responses might point in another direction.

The result is not insight, but noise.

Campaigns in this archetype often collect fewer than 10 text responses per reporting period and tend to show up in localized, niche, or highly segmented campaigns. This can include language-specific variants, seasonal campaigns that activate only occasionally, or newly launched surveys that have not yet gained traction. While the survey itself may still be useful, the data volume simply isn’t sufficient to support reliable narrative summaries.

A good example comes from a consumer brand’s Spanish-language NPS campaign, which averaged roughly four text responses per month. We saw a similar pattern in an automotive manufacturer’s program averaging roughly two responses per month. In both cases, the campaigns were active and technically functioning, but the response volume was simply too low to support meaningful thematic interpretation.

This is what makes Desert so dangerous. The limitation is not just that insight is weak, but that the output can become misleading. Modern analytics tools and AI systems will still try to generate confident-sounding summaries from whatever data they are given. With only three responses, a report might suggest that customer sentiment has suddenly collapsed because all three respondents were dissatisfied. In reality, that may simply mean you happened to hear from three unhappy customers that month.

For campaigns that fall into the Desert archetype, the most responsible approach is often to step back from thematic analysis altogether. Instead of forcing narrative summaries from tiny samples, teams should focus on whether the campaign is collecting enough usable feedback to support interpretation in the first place.

Useful signals to monitor include:

- whether response volume is increasing or declining

- whether the survey is reaching the intended audience

- whether the timing and distribution of the survey are appropriate

Numeric scores can still provide useful directional information, but narrative insights should be treated cautiously until the dataset becomes large enough to support reliable analysis.

In other words, when feedback volume is extremely low, the most responsible form of reporting may simply be acknowledging that there isn’t enough information yet to draw meaningful conclusions.

What the Data Actually Shows

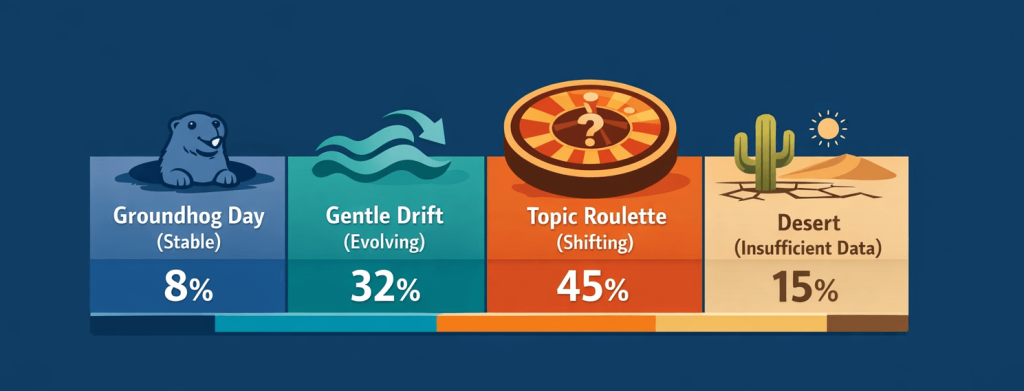

Once these archetypes became clear, the next step was to understand how common each one actually is.

Across the campaign transitions we analyzed, the distribution turned out to be surprisingly uneven. Only a minority of surveys behaved in the gradual, evolving way that most CX reporting assumes.

Instead, feedback dynamics were spread across all four archetypes.

The most striking insight is that only about one-third of campaigns fall into the Gentle Drift category – the environment where traditional month-to-month comparison reporting works best. The remaining campaigns behave very differently.

High-volume surveys often fall into the Groundhog Day pattern, where feedback becomes statistically stable and meaningful changes are rare. At the other end of the spectrum, many lower-volume surveys behave more like Topic Roulette, where themes shift dramatically between reporting periods. And a non-trivial portion of campaigns simply don’t collect enough data to support reliable thematic analysis at all.

Taken together, this means that most CX reporting systems are optimized for the minority of campaigns that behave in the most predictable way.

In the next section, we’ll look at several findings that emerged from the data once these archetypes were taken into account.

Core Findings

Once we started analyzing campaigns through the lens of these archetypes, several patterns emerged that were not immediately obvious. Some of them even contradicted common assumptions about how customer feedback behaves.

1. Higher Volume Often Leads to More Repetition

Intuitively, many teams expect large feedback datasets to produce richer and more varied insights. In reality, the opposite often happens.

When a survey collects very high response volumes – for example, more than a thousand responses per period – the distribution of themes tends to stabilize. Each reporting cycle produces a sample that closely resembles the previous one, and the same strengths and pain points appear consistently.

This is not a flaw in the analysis; it is a normal statistical effect. Large samples tend to converge toward the underlying distribution of opinions within the customer base. As a result, feedback becomes predictable, and summaries may appear repetitive even when they are accurately describing the data.

2. CSAT Surveys Tend to Produce More Stable Themes Than NPS

Another pattern that emerged was the difference between survey types.

CSAT surveys typically ask about a specific interaction – for example, a support conversation or a service experience. Because the context is clearly defined, the range of possible topics is relatively narrow. Customers tend to comment on similar aspects of the experience, such as response time, helpfulness, or issue resolution.

NPS surveys, on the other hand, invite much broader feedback. When customers are asked how likely they are to recommend a product or service, their comments can touch on almost any part of the experience: pricing, features, onboarding, reliability, integrations, or support.

This wider scope naturally produces more diverse and volatile feedback patterns, particularly in lower-volume datasets.

3. The Most Valuable Insights Often Come From Disruptions

Across all archetypes, the most meaningful insights rarely came from small month-to-month variations. Instead, they appeared during moments when something in the customer experience actually changed.

Operational events – such as product launches, seasonal demand spikes, service outages, or promotional campaigns – often created clear shifts in the feedback landscape. These disruptions temporarily altered the usual pattern of themes and made new signals visible.

In other words, the most valuable insights tend to appear when the normal feedback pattern is interrupted. Recognizing when such shifts occur is often more important than tracking minor fluctuations between otherwise stable reporting periods.

4. Feedback Programs Often Move Through the Archetypes as They Mature

Feedback programs also tend to shift between archetypes over time rather than staying in one mode.

Early on, surveys tend to sit in Desert, where low participation limits what can be learned. As volume builds, many move into Topic Roulette, where feedback becomes analyzable but remains volatile. With further growth, programs often reach Gentle Drift, where recurring themes begin to hold and comparisons become more meaningful. At very high volumes, some eventually settle into Groundhog Day, where theme distribution stabilizes and change becomes harder to detect.

This progression highlights how feedback systems naturally evolve as participation grows and datasets become more stable.

5. A Few Simple Signals Are Usually Enough to Diagnose the Pattern

In practice, most campaigns can be classified fairly quickly by looking at a few basic signals. You don’t need complex models or advanced analytics, just a simple assessment of how the feedback behaves over time.

Three indicators are usually enough to determine the archetype of a survey:

- Response volume – how many responses the campaign collects in each reporting period

- Theme overlap – how often the same topics appear from one period to the next

- Score volatility – how much the overall score changes between periods

Together, these signals provide a reliable picture of the feedback dynamics behind a campaign.

Surveys with very high response volumes, strong theme continuity, and minimal score movement usually fall into Groundhog Day. Campaigns with moderate volume and partial theme overlap tend to resemble Gentle Drift. Lower-volume surveys with minimal continuity typically behave like Topic Roulette. And surveys with only a handful of responses per period fall into Desert.
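These rules of thumb can be written down as a small decision function. The sketch below reuses the typical ranges quoted earlier in this article (more than 1,500 responses, over 75% overlap, and score changes under 0.2 for Groundhog Day; 100 to 700 responses with 30% to 60% overlap for Gentle Drift; fewer than 200 responses with under 30% overlap for Topic Roulette; fewer than 10 responses for Desert). Those ranges do not cover every combination, so the order of the checks and the "Mixed signals" fallback are assumptions made for this example, as is the `classify_campaign` name. It also treats total responses and text responses as the same number, which is a simplification.

```python
def classify_campaign(responses: int, theme_overlap: float, score_change: float) -> str:
    """Map three per-period signals to an archetype label.

    responses     -- responses collected in the period
    theme_overlap -- share of top themes repeated from the previous period (0 to 1)
    score_change  -- change in the average score versus the previous period
    """
    swing = abs(score_change)
    if responses < 10:
        return "Desert"
    if responses > 1500 and theme_overlap > 0.75 and swing < 0.2:
        return "Groundhog Day"
    if responses < 200 and theme_overlap < 0.30:
        return "Topic Roulette"
    if 100 <= responses <= 700 and 0.30 <= theme_overlap <= 0.60:
        return "Gentle Drift"
    # The quoted ranges leave gaps (for example 700 to 1,500 responses),
    # so anything in between is left for a human to judge.
    return "Mixed signals"


print(classify_campaign(8000, 0.85, 0.1))
print(classify_campaign(600, 0.50, 0.09))
print(classify_campaign(150, 0.20, 1.8))
print(classify_campaign(4, 0.0, 2.0))
```

A fallback label is deliberate: campaigns that sit between the bands are better reviewed by hand than forced into the nearest archetype.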

Adapting CX Reporting to the Archetype

Once a campaign’s feedback behavior becomes clear, the next step is adjusting the reporting approach to match it. One of the main reasons CX digests often feel repetitive or confusing is that the same reporting model is applied to every survey, regardless of how the feedback actually behaves.

But as we’ve seen, the dynamics behind each archetype are very different. What works well for one type of campaign may produce little value or even misleading insights in another.

For Groundhog Day campaigns, where feedback is highly stable, traditional month-to-month comparisons rarely reveal anything new. In these cases, reporting works better as an early warning system. Instead of summarizing the same themes every period, the analysis should focus on detecting meaningful deviations from the long-term pattern – unusual spikes in complaints, emerging issues, or shifts within specific customer segments.

In Gentle Drift campaigns, the standard reporting model tends to work well. Because the feedback evolves gradually, period-to-period comparisons can highlight small but meaningful changes in the customer experience. Here, monthly summaries and trend analysis provide valuable context for understanding how the narrative is developing over time.

For surveys that behave like Topic Roulette, the goal should shift away from comparing periods and toward understanding the current snapshot of feedback. Since themes change frequently, the most useful insights often come from identifying clusters of similar comments within the current dataset and surfacing issues that may require immediate attention.

Finally, Desert campaigns require a completely different focus. When response volume is extremely low, detailed thematic analysis can quickly become unreliable. In these cases, reporting should emphasize participation trends, score monitoring, and efforts to improve response volume rather than generating narrative summaries from a handful of comments.

Adapting the reporting model to the feedback archetype helps ensure that CX analysis remains both accurate and useful. Instead of forcing every survey into the same analytical template, the reporting process begins to reflect the actual behavior of the feedback system.

Modern CX tools can automatically adjust reporting to match these archetypes. For example, Retently’s AI feedback analysis detects response volume, theme stability, and score volatility across reporting periods, adapting summaries to highlight anomalies in stable datasets, emerging trends in evolving campaigns, or fresh insight clusters in more volatile feedback streams.

The Real Lesson Behind Repetitive CX Reports

Repetitive CX digests are often treated as a reporting failure. If the same themes keep appearing, the instinct is to assume the summaries are too generic or that the analysis is no longer adding value.

But repetition is not always a flaw. In many cases, it reflects a stable feedback system: the underlying customer experience has not changed in a meaningful way, so the dominant themes remain consistent. A familiar report can simply be an accurate one.

That shifts the role of CX reporting. Its job is not to manufacture novelty from one period to the next, but to make meaningful change easier to see when it actually happens. The real value of reporting lies less in constant summarization and more in helping teams distinguish between stability, noise, and genuine movement in the customer experience.

Once that becomes the goal, repetitive reports stop being a sign that something is broken. They become a signal in themselves – one that tells you the system may be stable, and that what matters most is knowing when that stability breaks.

Want to see how your own feedback campaigns behave? Start a free trial with Retently and uncover the patterns shaping your customer experience.

Methodology

This analysis is based on feedback data collected across production Voice of Customer programs spanning multiple industries, including B2B software, ecommerce, and subscription services. The dataset included several thousand survey campaigns using NPS, CSAT, and CES methodologies, each with multiple reporting periods.

To understand how feedback evolves over time, we analyzed month-to-month campaign transitions, measuring both score volatility and thematic continuity between consecutive periods. Theme continuity was estimated using keyword overlap between reporting periods, allowing us to observe how stable or volatile feedback topics remained over time.
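For readers who want to try a similar measurement on their own data, here is one plausible way to compute keyword overlap between two periods. The exact formula used in the analysis is not spelled out here, so treat this as an assumption: it measures the share of the previous period's top keywords that reappear in the current period's top keywords. The `theme_overlap` function and the sample keyword lists are hypothetical.

```python
def theme_overlap(previous: list[str], current: list[str], k: int = 5) -> float:
    """Share of the previous period's top-k keywords that recur in the current top-k.

    Both lists are expected to be ranked, most frequent keyword first.
    """
    prev_top = set(previous[:k])
    curr_top = set(current[:k])
    if not prev_top:
        return 0.0  # no keywords last period, so nothing can carry over
    return len(prev_top & curr_top) / len(prev_top)


# Hypothetical ranked keywords for two consecutive months.
last_month = ["scheduling", "billing", "communication", "wait times", "pricing"]
this_month = ["billing", "scheduling", "wait times", "communication", "parking"]

print(theme_overlap(last_month, this_month))  # four of five keywords carried over
```

Other set-similarity measures, such as the Jaccard index, would work in the same spirit. What matters is applying one definition consistently across periods.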

Based on these signals – response volume, score movement, and theme overlap – campaigns were grouped into four behavioral archetypes: Groundhog Day, Gentle Drift, Topic Roulette, and Desert.

While the exact distribution varies across industries and survey designs, the behavioral patterns described in this study were consistent across a wide range of Voice of Customer programs.

Greg Raileanu

Christina Sol
