Most CX studies ask what customers said. This one started with a more uncomfortable question: which responses were no longer there.

We noticed that a non-trivial number of customer responses were being marked as deleted inside Retently accounts. That isn’t strange on its own – spam, test records, obvious duplicates all get removed by CX teams every day. What caught our attention was a question: were Detractors getting deleted at the same rate as Promoters? Or was something else going on?

To answer that, the analysis looked at more than 2.6 million NPS responses collected over six months across Retently accounts, using anonymized and aggregated data.

What we found is the kind of finding you don’t really want to publish, because it says something uncomfortable about the market we serve. But the alternative – sitting on it – would make us complicit in the problem. So here it is.

Key Takeaways

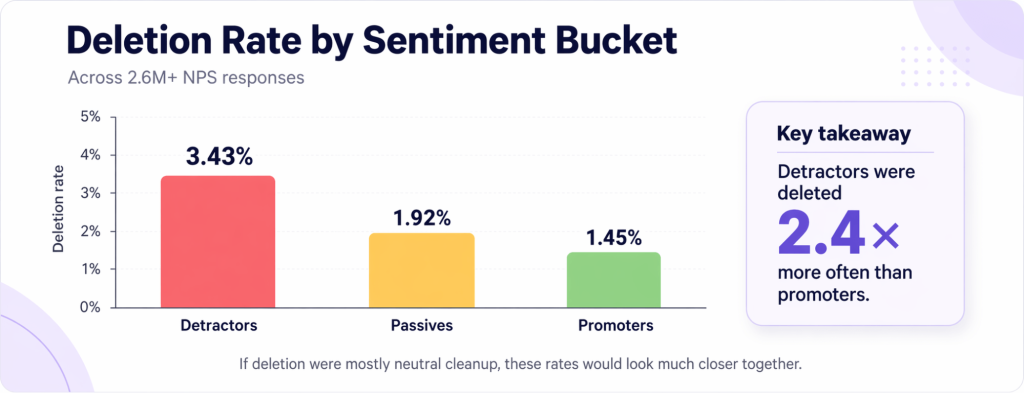

- Across more than 2.6 million NPS responses, Detractors were 2.4× as likely to be deleted as Promoters.

- 60% of deleted Detractors had written comments, which means many of the removed responses belonged to customers who took the time to explain a problem.

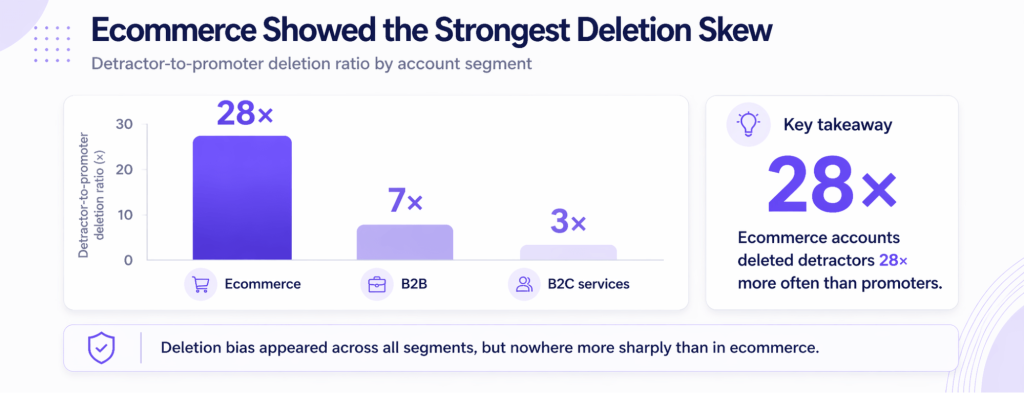

- The pattern was most extreme in Ecommerce, where Detractors were deleted at 28× the rate of Promoters, far above B2B (7×) and B2C services (3×).

- The deletion behavior was concentrated, not broad-based. A small number of accounts were responsible for most of the distortion.

- That makes deletion bias more than a data-quality issue. It suggests that, in some cases, NPS is being shaped not only by what customers say, but by what organizations choose to keep.

- The business risk is not just an inflated score. It is a dashboard that looks cleaner than reality, hides early warning signs, and makes bad decisions easier to justify.

What Deletion Bias Looks Like in a CX Dataset

Deletion bias happens when survey responses are removed from the dataset in a way that is not evenly distributed. In practice, that means some types of feedback are more likely to disappear than others, not because they are less valid, but because they are less welcome.

That is what makes this different from the usual response bias conversation. Response bias is about who answers the survey in the first place. Deletion bias happens later. The customer has already responded. The score has already been given. The comment may already be sitting in the system. The distortion enters when some of those records are more likely to be removed after the fact.

It is important to be clear here: deletion is not inherently wrong. A clean dataset still requires some cleaning. The problem begins when that cleanup stops being neutral.

And that is why deletion bias is so easy to miss. The final dashboard can still look polished, structured, and credible. The distortion is not always visible in the total number of deleted responses. It shows up in the pattern of what disappears.

What the Data Shows

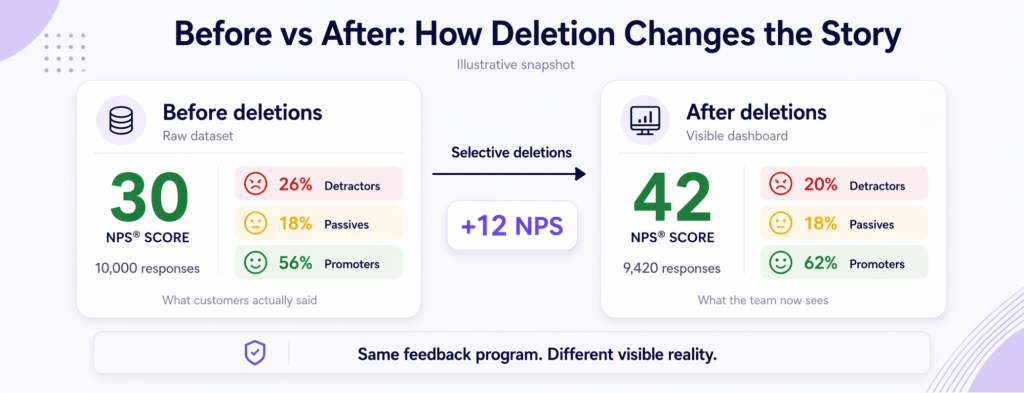

Across more than 2.6 million NPS responses collected across Retently accounts between late October 2025 and April 2026, 48,090 were marked as deleted. That is 1.85% of all responses. On its own, that number does not look especially dramatic. The real story appears when the same data is split by sentiment.

Detractors had a deletion rate of 3.43%. Passives came in at 1.92%. Promoters were deleted at 1.45%. That means Detractors were deleted at 2.4 times the rate of Promoters. That gap isn’t random noise, and it matters much more than the overall deletion rate, because the overall number hides the asymmetry inside it.
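The ratio follows directly from the three rates quoted above. As a quick check, here is a minimal Python sketch that uses only those published percentages; the variable and function names are ours, chosen for illustration.

```python
# Deletion rates by NPS bucket, in percent, as quoted in the text above.
deletion_rate_pct = {"Detractor": 3.43, "Passive": 1.92, "Promoter": 1.45}


def ratio_to_promoters(bucket: str) -> float:
    """How many times more often a bucket is deleted than Promoters."""
    return deletion_rate_pct[bucket] / deletion_rate_pct["Promoter"]


detractor_ratio = ratio_to_promoters("Detractor")
passive_ratio = ratio_to_promoters("Passive")

print(f"Detractors vs Promoters: {detractor_ratio:.1f}x")  # prints 2.4x
print(f"Passives vs Promoters: {passive_ratio:.1f}x")      # prints 1.3x
```

Passives sit between the two extremes, which is what a deletion rate that rises as scores fall would look like.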

If deletion were being driven mainly by routine cleanup, those rates should be roughly equal across sentiment buckets. Spam is not more likely to come from unhappy customers. Duplicates do not consistently skew negative. Test records do not naturally cluster around low scores. What the disproportionately deleted responses have in common is the dissatisfaction itself. And somewhere in the workflow, that dissatisfaction is being quietly removed from the record.

That is the point where this stops looking like ordinary dataset maintenance and starts looking like a distortion in what the final score is allowed to represent. Because once Detractors are disproportionately deleted, the metric no longer reflects only the customer experience. It begins to reflect the organization’s tolerance for bad news.

The Part that Makes the Finding Worse

When we drilled into the deleted Detractors, we found something that shifted our framing of the problem entirely. 60% of deleted Detractors had written comments attached to their scores, compared to 53% of the Detractors that were kept.

That may sound like a small difference at first. Yet, a low score with no comment leaves limited context behind. A low score with a written explanation is something very different. It is a customer taking extra time to describe what went wrong, what frustrated them, or what nearly pushed them away. In other words, many of the Detractors being deleted were not low-value records. They were some of the most informative responses in the dataset.

That is what shifts the interpretation of this pattern. If the deleted responses were mostly empty, messy, or clearly invalid, the story would still sit closer to routine cleanup. But when a disproportionate share of deleted Detractors come with written comments, it becomes much harder to explain the pattern as harmless data hygiene.

These were customers who did more than give a bad score. They explained it. They gave specifics. They created the kind of customer feedback CX teams usually claim to want more of.

And that is why this part of the finding matters so much. The issue is not only that negative feedback was being deleted more often. It is that some of the feedback most likely to help a company understand and fix a problem was also more likely to disappear.

At that point, the pattern starts to look less like cleanup and more like curation.

The Segment Pattern: Why Ecommerce Stands Out

The same pattern appeared across account segments, but not with the same intensity. When the data was split across Ecommerce, B2B, and B2C services, Ecommerce stood out immediately.

In Ecommerce accounts, Detractors were deleted at a rate of 3.70%, while Promoters were deleted at just 0.13%. That is a 28× ratio, and the number is not a typo. B2B accounts showed a smaller but still striking gap, with Detractors deleted at 1.64% versus 0.24% for Promoters, or about 7×. B2C services came in lower, with Detractors at 0.74% and Promoters at 0.24%, for a 3× ratio.
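The segment ratios can be reproduced the same way. This short Python check assumes nothing beyond the percentages quoted above; the dictionary layout is our own.

```python
# (Detractor deletion rate %, Promoter deletion rate %) per segment,
# as quoted in the text above.
segment_rates_pct = {
    "Ecommerce": (3.70, 0.13),
    "B2B": (1.64, 0.24),
    "B2C services": (0.74, 0.24),
}

# Ratio of Detractor deletions to Promoter deletions in each segment.
segment_ratios = {
    name: detractor / promoter
    for name, (detractor, promoter) in segment_rates_pct.items()
}

for name, ratio in segment_ratios.items():
    print(f"{name}: {ratio:.1f}x")
# prints:
# Ecommerce: 28.5x
# B2B: 6.8x
# B2C services: 3.1x
```

Rounded to whole numbers, these are the 28×, 7×, and 3× figures used throughout this article.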

That does not mean every ecommerce brand is manipulating feedback, or that B2B and services are somehow clean by default. It means the pressure to make bad feedback disappear appears strongest where customer sentiment is closely tied to commercial performance and brand perception.

The reason isn’t hard to guess. In Ecommerce – particularly DTC – the NPS score is often just one input into a larger reputation economy: Trustpilot scores, Google Shopping ratings, Meta ad performance, influencer partnerships. A Detractor inside a CX tool can feel adjacent to a one-star review on a public platform, even when it isn’t public. The temptation to “clean up” before stakeholders see the dashboard is strongest exactly where public perception has the highest commercial stakes.

The B2B pattern is less extreme, but still meaningful. Enterprise teams face their own version of reporting pressure through QBRs, board decks, customer advisory boards, renewal conversations, and executive visibility. A 7× ratio suggests that negative feedback is not only difficult in fast-moving consumer environments. It can also become inconvenient in highly managed stakeholder environments.

B2C services – think property management, local service businesses, healthcare – look relatively healthier by comparison, but a 3× gap is still not neutral.

The broader takeaway is that deletion bias is not evenly distributed either. It appears strongest where commercial, reputational, or internal reporting pressure makes negative feedback hardest to tolerate.

The Concentration Problem

Just as important, the deletion behavior was not spread evenly across the platform. It was concentrated in a relatively small number of accounts.

Only 15 accounts were responsible for ten or more Detractor deletions during the six-month period. That matters because it changes the shape of the problem. This is not a story about every team making tiny judgment errors at the margins. It is a story about a smaller group of accounts introducing a disproportionate amount of the distortion.

In the most extreme anonymized case, one account deleted well over half of its Detractors while leaving essentially all of its Promoters in place. On paper, that account’s NPS view would look healthier than it should. Its trend line would appear cleaner. Its reported customer sentiment would be less volatile and less alarming than the underlying experience likely was.
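For teams that manage several workspaces, the same concentration check is easy to sketch. The Python below is an illustration only: the `(account_id, score)` tuples and the sample data are hypothetical, and the threshold of ten mirrors the cut-off used above. The Detractor range of 0 to 6 is the standard NPS definition.

```python
from collections import Counter


def is_detractor(score: int) -> bool:
    """Standard NPS buckets: scores 0-6 are Detractors."""
    return 0 <= score <= 6


def accounts_over_threshold(deleted_responses, threshold=10):
    """Return accounts with at least `threshold` deleted Detractor responses.

    `deleted_responses` is an iterable of (account_id, score) tuples,
    a hypothetical export format used only for this sketch.
    """
    counts = Counter(
        account_id
        for account_id, score in deleted_responses
        if is_detractor(score)
    )
    return {acc: n for acc, n in counts.items() if n >= threshold}


# Made-up sample: acct_a deleted 12 Detractors and 1 Promoter,
# acct_b deleted 4 Detractors.
sample = [("acct_a", 2)] * 12 + [("acct_a", 9)] + [("acct_b", 3)] * 4
flagged = accounts_over_threshold(sample)
print(flagged)  # prints {'acct_a': 12}
```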

That concentration cuts both ways. On one hand, it makes the pattern harder to dismiss as random noise, because a small number of accounts are producing behavior that is too directional to ignore. On the other hand, it also means the issue is not hopelessly diffuse. It is concentrated enough to be monitored, audited, and addressed.

And that is an important distinction. A problem spread evenly across every account would suggest a broad structural flaw in how teams handle feedback. A problem concentrated in a small subset of accounts points more clearly to governance, incentives, or local workflow habits that are allowing deletion bias to take hold.

So while the topline numbers show that Detractors were more likely to be deleted overall, the concentration pattern shows something else: most of the damage is being done by a minority of accounts with a particularly high tolerance for curating bad news.

Why This Matters to Whoever is Paying the Bill

If you’re a founder, a CEO, or the person who owns CX outcomes at your company, this is the part to read slowly.

You are paying for an NPS tool so you can hear what your customers think. The entire value of that purchase rests on one assumption: that the data you see on Monday morning is a faithful record of what your customers actually said.

When Detractors get deleted disproportionately, three things happen. None of them are good.

- You lose your early warning system. Detractors are often the leading indicator of churn. The customer who took the time to write a paragraph about why they’re frustrated is telling you, in plain language, what will cause them to leave. Delete that record, and you’ve converted a solvable retention problem into a future churn mystery.

- You silence the customers who cared enough to engage. A Detractor with a comment is not a hostile party. They’re a partner trying to help you. Deleting them isn’t just a data choice, it’s a message, even if the customer never sees it, that their effort was unwelcome.

- You end up trusting a dashboard that lies to you. Your NPS trend chart slopes upward. Your executive report looks clean. The number your board sees is flattering. And somewhere in your org, the reason for all of that is a CX process that treats bad news as a problem to be removed rather than a signal to be addressed.

The uncomfortable version of this is that some share of companies using NPS tools – not just ours – are probably operating on a corrupted picture of reality right now, and the only people who know are the ones doing the deleting.

That is what makes deletion bias more than a technical issue. It changes what the metric is for. Instead of acting as a warning system, a learning system, or a retention signal, NPS starts drifting toward something else: a comfort metric that reflects what the organization is willing to keep in view.

And for a founder, CEO, or CX leader, that is the real risk. Not just a slightly inflated score, but the false confidence that comes with believing the dashboard is telling the whole truth.

The Bigger Organizational Truth Behind the Data

Deletion bias is easy to describe as a data problem. In practice, it is usually something more revealing than that.

A dashboard does not become selectively cleaner by accident. When negative feedback disappears more often than positive feedback, that usually points to pressure somewhere in the system. Sometimes that pressure is commercial. Sometimes it is as simple as a team not wanting to escalate another uncomfortable issue before a review, a board update, or a stakeholder meeting.

That is why this pattern matters beyond methodology. It says something about how an organization handles bad news. In healthier feedback cultures, Detractors are treated as signals to investigate. In weaker ones, they start to feel like messes to manage.

That shift is subtle, but important. Once negative feedback is treated primarily as a reporting problem, the metric stops doing the job it was bought to do. Instead of helping a company learn faster, it starts helping it feel safer.

This is also what makes deletion bias different from a one-off data cleanup mistake. The more uneven the pattern becomes, the less it looks like random housekeeping and the more it starts to reflect internal incentives, tolerance for discomfort, and the unwritten rules around visibility.

In that sense, the issue is not only whether some responses were deleted. It is whether the organization has built a culture where bad feedback is allowed to stay visible long enough to matter.

And that is the harder truth sitting underneath the numbers. A distorted score is rarely just a distorted score. It is often a sign that the company has become more comfortable curating the signal than confronting what created it.

What To Do If This Makes You Uneasy

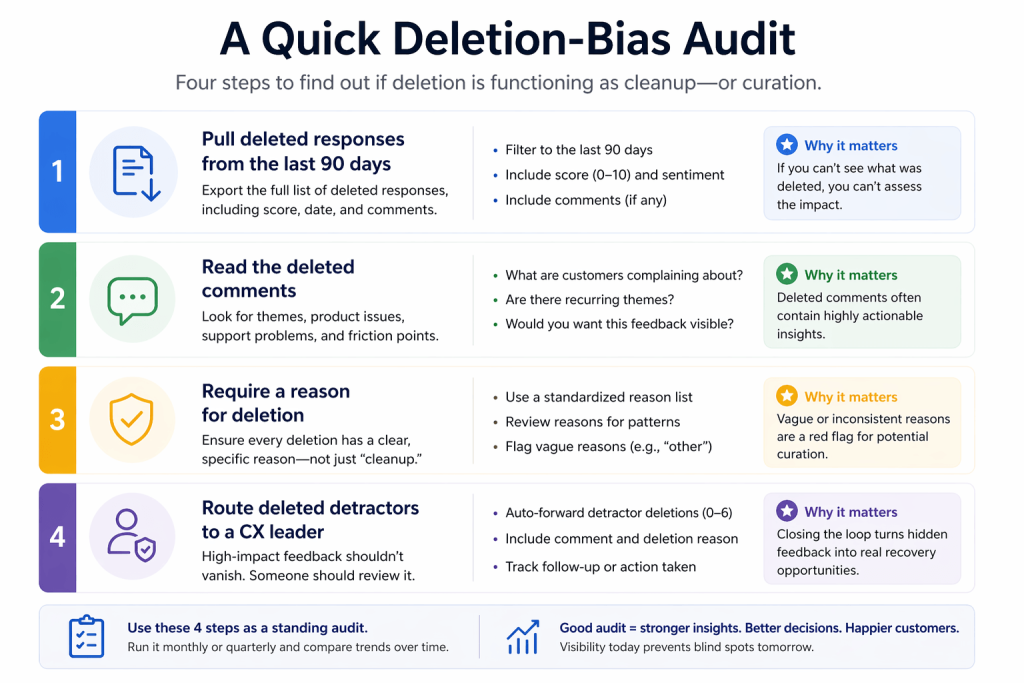

For companies reading this and wondering whether something similar could be happening in their own NPS program, the good news is that this is not hard to check. It does not require a major audit, a new strategy deck, or a long internal investigation. A few simple checks can reveal a lot very quickly.

The first step is to ask for every deleted response from the last 90 days. Not just the count. The full list, including the original score and any written comment. If the tool cannot produce that, that is already a useful finding. If it can, the pattern is often visible much faster than expected.
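Once an export exists, splitting it by sentiment takes a few lines. The sketch below assumes each exported row carries a `score` and a `deleted` flag. Those field names are hypothetical rather than any specific tool’s export format, and the sample rows are made up.

```python
from collections import defaultdict


def nps_bucket(score: int) -> str:
    """Standard NPS buckets: 9-10 Promoter, 7-8 Passive, 0-6 Detractor."""
    if score >= 9:
        return "Promoter"
    if score >= 7:
        return "Passive"
    return "Detractor"


def deletion_rates(responses):
    """Share of responses marked deleted, per NPS bucket."""
    totals = defaultdict(int)
    deleted = defaultdict(int)
    for row in responses:
        bucket = nps_bucket(row["score"])
        totals[bucket] += 1
        if row["deleted"]:
            deleted[bucket] += 1
    return {bucket: deleted[bucket] / totals[bucket] for bucket in totals}


# Made-up sample: 2 of 10 Detractors deleted, 1 of 20 Promoters deleted.
responses = (
    [{"score": 3, "deleted": True}] * 2
    + [{"score": 3, "deleted": False}] * 8
    + [{"score": 10, "deleted": True}] * 1
    + [{"score": 10, "deleted": False}] * 19
)
rates = deletion_rates(responses)
ratio = rates["Detractor"] / rates["Promoter"]
print(f"Detractor/Promoter deletion ratio: {ratio:.1f}x")
```

A ratio close to 1 is consistent with neutral cleanup. A ratio well above 1 is the signal to go and read what was removed.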

The second step is to read the deleted comments, all of them. That may sound basic, but it is likely the highest-leverage hour you’ll spend on CX this quarter, and it is often more revealing than another month of dashboard tracking. Deleted Detractor comments can show exactly what kind of feedback is being removed and whether the issue is mostly harmless cleanup or something more serious.

The third step is to make deletion require a reason. Most CX tools allow deletion with one click. Change your internal rule: any deletion has to include a written reason in a shared log. Not because every deletion is suspicious, but because even a small amount of friction changes behavior. Once someone has to explain why a response is being removed, the difference between legitimate cleanup and quiet curation becomes much harder to blur.
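The rule can be as light as a shared spreadsheet, but for teams that script their cleanup, it might look something like the following Python sketch. Everything here is hypothetical: `log_deletion`, `DeletionLogEntry`, and the IDs are invented for illustration and do not correspond to any CX tool’s API.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass
class DeletionLogEntry:
    response_id: str
    deleted_by: str
    reason: str
    logged_at: datetime


def log_deletion(log, response_id, deleted_by, reason):
    """Append an entry to the shared log, refusing blank reasons."""
    cleaned = reason.strip()
    if not cleaned:
        raise ValueError("A written reason is required before deleting a response.")
    entry = DeletionLogEntry(
        response_id, deleted_by, cleaned, datetime.now(timezone.utc)
    )
    log.append(entry)
    return entry


shared_log = []
log_deletion(shared_log, "resp_123", "ana@example.com", "Internal test submission")

# A whitespace-only reason is rejected, so nothing is logged for it.
try:
    log_deletion(shared_log, "resp_456", "ana@example.com", "   ")
    blank_reason_rejected = False
except ValueError:
    blank_reason_rejected = True
```

The point of the sketch is the friction, not the storage: the deletion path cannot complete without a sentence someone else can later read.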

The fourth step is to route deleted Detractors to a founder, CEO, or head of CX by default. Not to shame anyone, not as punishment, and not to create a surveillance culture. Simply as a governance checkpoint – to close the loop. If the deletion is legitimate, it will be obvious. If it is not, the company has a chance to recover the signal before it disappears into reporting.

What makes these steps valuable is that they are diagnostic before they are corrective. They help a company understand whether deletion is functioning as routine hygiene or whether it has quietly become part of how the metric is managed.

And that is the right place to start. Before debating the process, policy, or tool design, it is worth answering one simpler question first: what kind of feedback is actually being removed, and why?

What We’re Changing at Retently

This analysis did not just raise questions about customer behavior. It also forced a closer look at the product itself.

At the time of the analysis, Retently allowed account admins to delete responses, but those deletions were recorded too narrowly. Each was stored as a single boolean flag – no timestamp, no user attribution, no audit log, no event fired. A CX manager could delete a Detractor with a written comment and, from the perspective of the rest of the team, the record simply never existed.

That’s not good enough. It’s especially not good enough coming from a company that sells its customers on the promise of hearing what their customers really think.

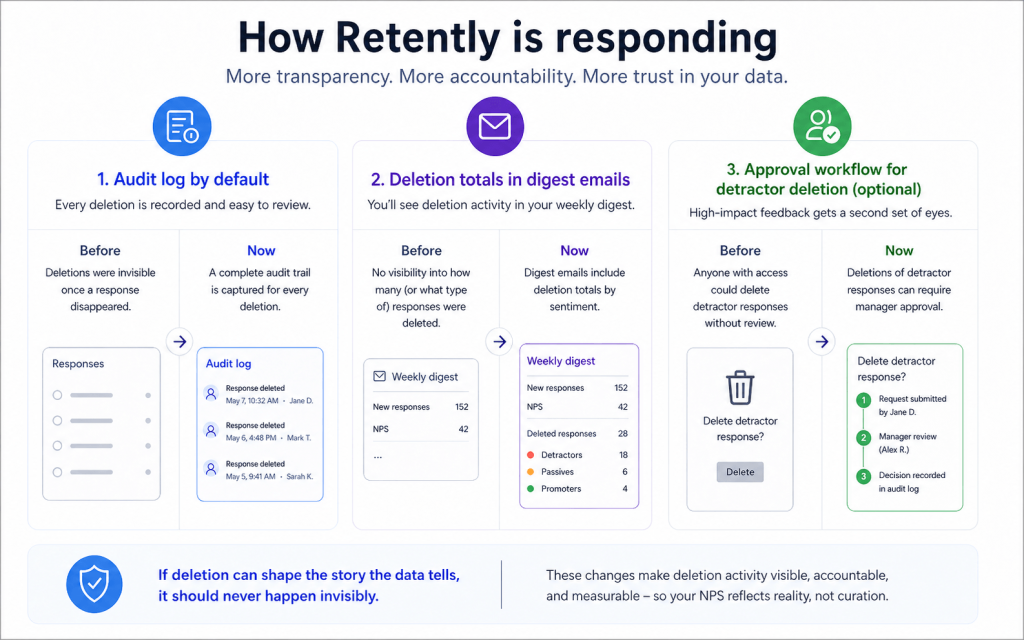

So we’re shipping three changes over the next two quarters.

- A full audit log, on by default, for every account. Every deletion – who did it, when, the original content – visible to account admins. This isn’t a premium feature. It’s a baseline trust feature, and it belongs in every workspace whether you asked for it or not.

- Aggregate deletion totals in your digest emails. When your Monday digest goes out, it will include a line like “14 responses were deleted this month – view audit log.” No names, no surveillance. Just a factual signal so the people who should be checking know it’s something to check.

- Optional approval workflows for Detractor deletion. For accounts that want a hard stop: an admin has to approve any Detractor deletion before it takes effect. Off by default. Available for teams that want governance built into the workflow, not bolted on after the damage is done.

The first two ship in Q2, while the approval workflow follows in Q3.
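To make the difference from a single boolean flag concrete, here is a rough Python sketch of what an audit record and a digest line could carry. It is an illustration under our own assumptions, not Retently’s actual schema or code: the `DeletionEvent` fields and the `digest_line` helper are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class DeletionEvent:
    """Hypothetical audit record: who deleted what, and when."""
    response_id: str
    deleted_by: str
    deleted_at: datetime
    original_score: int
    original_comment: Optional[str]


def digest_line(events, year: int, month: int) -> str:
    """Aggregate count only: no names appear in the digest."""
    count = sum(
        1
        for e in events
        if e.deleted_at.year == year and e.deleted_at.month == month
    )
    return f"{count} responses were deleted this month - view audit log"


events = [
    DeletionEvent("r1", "admin_1", datetime(2026, 4, 2, tzinfo=timezone.utc), 2, "Late delivery"),
    DeletionEvent("r2", "admin_1", datetime(2026, 4, 9, tzinfo=timezone.utc), 10, None),
    DeletionEvent("r3", "admin_2", datetime(2026, 3, 30, tzinfo=timezone.utc), 4, None),
]
april_digest = digest_line(events, 2026, 4)
print(april_digest)  # prints "2 responses were deleted this month - view audit log"
```

Unlike a boolean flag, a record of this shape preserves the original score and comment, so a deleted Detractor can still be reviewed after the fact.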

The broader point is straightforward. If a platform allows deletion, it also needs to make deletion visible. Otherwise, one of the most consequential actions a team can take on customer feedback remains one of the hardest to audit.

In that sense, these changes are not just feature updates. They are part of making sure the metric remains trustworthy enough to deserve the decisions built on top of it.

The Bottom Line

This is not a comfortable finding to publish. Some of the behavior described in this analysis belongs to real accounts on the platform, which makes the story harder to tell, not easier.

But that is also why it matters. Once a pattern like this becomes visible, staying quiet starts to look too close to accepting it.

The best CX teams do not delete Detractors, because Detractors are often where the most useful truth lives. They are early warnings, explanations, and opportunities to fix something before it becomes churn, silence, or public damage.

That is the real divide this analysis points to. Not high-NPS teams versus low-NPS teams, but teams willing to face the full record versus teams tempted to manage the optics of it.

Because deleting a Detractor does not remove the underlying problem. It only removes one of the clearest chances to see it while there is still time to act. And for any company unsure which kind of feedback culture it has, there is a simple place to start: read the deleted comments.

Ready to trust what your dashboard tells you? Retently helps you collect, analyze, and act on customer feedback without losing what matters in the noise. Start your free trial or book a demo to see the audit log and governance controls in action.

Note: This study was based on aggregate, anonymized data from over 2.6M NPS responses collected across Retently accounts between October 2025 and April 2026. No individual customer or account is identifiable from this analysis. Raw data was not shared outside the internal research team. If you’re a Retently customer who would like an export of deletion activity within your own workspace, contact support – we’ll help you pull it.

Christina Sol


Greg Raileanu
