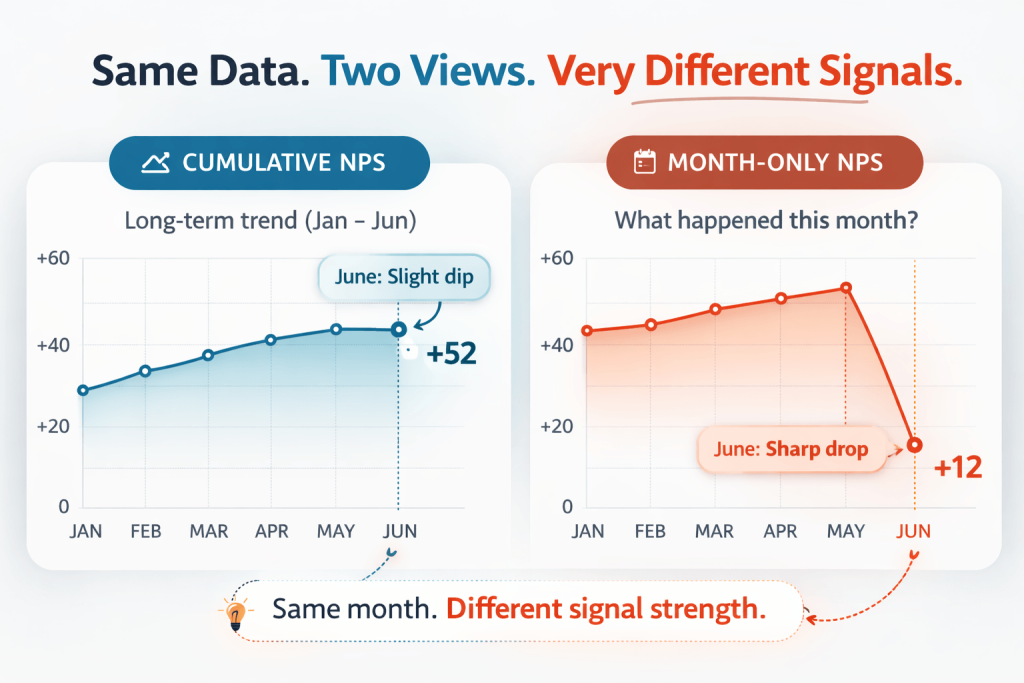

Most NPS charts look calm, even when customer experience is starting to shift. That is not always because things are going well. Sometimes, it is because the reporting view is better at preserving stability than highlighting change.

That matters because the same feedback can tell very different stories depending on how it is shown. One view can make a trend look steady and under control. Another can make a recent problem impossible to miss.

This is why the difference between cumulative and period-specific NPS matters. It is not just a reporting preference. It affects what teams notice, how quickly they react, and how easily emerging CX issues get dismissed.

Most CX tools have historically defaulted to cumulative reporting, and Retently was no exception until we introduced the month-only view in our reports.

In this article, we will look at what each view is actually showing, when cumulative reporting still makes sense, and when a period-specific lens gives a clearer read on what is happening right now.

Key Takeaways

- Cumulative and period-specific NPS do not just visualize the same data differently – they answer different questions. One is better for understanding the broader trend, while the other is better for spotting what changed in a specific period.

- Cumulative NPS is useful when the main risk is overreacting to noisy data. It works best in lower-volume, lower-frequency, or more executive-facing programs where stability matters more than immediate sensitivity.

- Period-specific NPS is more useful when the main risk is delayed detection. In high-volume, always-on programs, it helps teams catch experience shifts earlier and connect them to real operational events.

- The same chart movement can mean very different things depending on response volume. A drop backed by 12 responses and a drop backed by 1,200 responses should not be interpreted the same way.

- Use cumulative NPS for long-term monitoring and period-specific NPS for investigating where and when something changed.

These Two Views Do Not Just Display Data Differently – They Answer Different Questions

At first glance, cumulative and period-specific NPS may seem like two ways of visualizing the same feedback. Technically, they are. But in practice, they answer different questions, and that changes what teams take away from the chart.

Cumulative NPS combines responses across time. In practice, that means the score you see in month 6 includes responses from months 1 through 6. As new responses come in, they are added to a growing pool, so earlier months, strong or weak, continue to shape the current picture.

Period-specific NPS works differently. Each month is treated as its own snapshot. June reflects June only. It is not supported by a strong April or softened by a strong February. That makes it easier to see what changed within a specific period.

This is the real distinction:

- Cumulative NPS asks: How healthy has the relationship looked across the selected period overall?

- Period-specific NPS asks: What happened in this exact period?

That difference matters because most teams are not just looking for a score. They are trying to answer a practical question. Sometimes they want to understand the broader direction of customer sentiment. Other times, they want to know whether something changed recently and whether that change deserves attention.

A simple example makes the difference obvious. Imagine a brand with a strong and stable NPS from January through May. Then June brings shipping delays, damaged parcels, and a spike in complaints.

In a cumulative view, the drop may look fairly mild because June is still being blended into five stronger months. In a period-specific view, the drop appears immediately because June stands on its own. Same feedback. Different interpretation.
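To put rough numbers on that scenario, here is a minimal sketch. The monthly counts are invented for illustration, the names `nps`, `months`, `period_specific`, and `cumulative` are hypothetical, and the sketch pools raw response counts without any per-person deduplication.

```python
from itertools import accumulate

def nps(promoters, passives, detractors):
    """Net Promoter Score: percentage of promoters minus percentage of detractors."""
    total = promoters + passives + detractors
    return 100.0 * (promoters - detractors) / total

# Hypothetical monthly counts as (promoters, passives, detractors).
# January through May are strong and stable; June is the bad month.
months = {
    "Jan": (60, 30, 10),
    "Feb": (60, 30, 10),
    "Mar": (60, 30, 10),
    "Apr": (60, 30, 10),
    "May": (60, 30, 10),
    "Jun": (30, 30, 40),
}

# Period-specific view: each month is scored on its own responses only.
period_specific = {month: nps(*counts) for month, counts in months.items()}

# Cumulative view: each month is scored on the running totals up to that month.
running_totals = accumulate(
    months.values(), lambda a, b: tuple(x + y for x, y in zip(a, b))
)
cumulative = {month: nps(*counts) for month, counts in zip(months, running_totals)}

print(period_specific["May"], period_specific["Jun"])  # 50.0 -10.0
print(cumulative["May"], cumulative["Jun"])            # 50.0 40.0
```

With these made-up counts, June falls 60 points in the period-specific view and 10 points in the cumulative one, even though both are computed from the same responses.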

That is why this is not just a display choice. The reporting view shapes what the data seems to be saying.

The Real Trade-off Is Not Accuracy vs. Inaccuracy – It Is Stability vs. Sensitivity

Once the difference between the two views is clear, the next question is usually which one is more accurate. But that is not really the right way to think about it. Neither view is wrong. They are designed to do different jobs.

Cumulative NPS is built for stability. Because each new set of responses is absorbed into a larger historical pool, the chart becomes less sensitive to normal month-to-month variation. That makes it useful when teams want a steadier trend line, especially in CX programs where response counts are low or uneven.

Period-specific NPS is built for sensitivity. Because each period stands on its own, changes in sentiment become visible faster. That makes it much more useful when teams need to spot issue onset, connect a dip to a specific event, or understand when something started to shift.

So the real trade-off is not accuracy versus inaccuracy. It is stability versus sensitivity.

Another way to think about it is this: cumulative NPS is better at preserving continuity, while period-specific NPS is better at surfacing movement.

A useful analogy here is photography. Cumulative NPS is like a long-exposure image. It creates a smoother picture, but sudden movement gets blurred. Period-specific NPS is more like frame-by-frame playback. It can look noisier, but it shows much more clearly when something has changed.

That is why the right choice depends on the risk your team is trying to avoid. If the bigger risk is overreacting to noise, cumulative NPS is often the safer lens. If the bigger risk is missing the first signs of a real CX problem, period-specific NPS is usually the better one.

Why Cumulative Became the Default and Why That Default Does Not Always Fit the Way NPS Is Used Today

Cumulative NPS became the default for a reason. It matched the way many CX programs were originally designed.

Early NPS programs were often relationship-based, lower-frequency, and leadership-facing. Surveys were sent less often, usually to a defined customer base, and the goal was to understand customer loyalty over time rather than track every short-term fluctuation.

In that setup, cumulative reporting made a lot of sense. It reduced noise, produced smoother trend lines, and gave teams a stable view of the broader direction. It also became the expected default, partly because it looked cleaner and was easier to present.

The challenge is that many CX programs today operate very differently. A growing number are now always on. Feedback is collected continuously, and NPS is often tied to specific touchpoints such as onboarding, support interactions, deliveries, product usage, or post-release experience.

That changes what teams need from the data. When feedback is arriving all the time, teams are no longer asking only, “How are we doing overall?” They are also asking, “What changed this month?”, “Did that release affect sentiment?”, and “Did that operational issue show up in feedback?”

Those are not cumulative questions. They are period-specific ones.

And yet many dashboards still default to cumulative reporting because that is the model the industry inherited. In other words, teams often use a reporting lens built for a slower, more strategic version of NPS even when their actual use case has become much more operational and time-sensitive.

That does not make cumulative reporting wrong. But it does mean the default is not always the best fit for the job anymore.

Where Cumulative NPS Still Makes Sense

For all its limitations, cumulative NPS is still the better view in plenty of situations.

It works best when response volume is low or inconsistent. If only a small number of responses come in during a given month, a period-specific score can swing sharply based on just a few Promoters or Detractors. In those cases, the bigger risk is not missing a fast-moving issue. It is overreacting to noise that does not reflect a real shift in the broader customer base.

That is why cumulative reporting makes sense for lower-frequency relationship NPS programs, especially those running quarterly or biannually rather than continuously. When the goal is to understand customer sentiment across a longer stretch of time, a smoother view helps preserve perspective.

It is also a strong fit for long-cycle B2B environments, where relationships evolve more gradually and survey opportunities are limited. With a smaller customer base, each response carries more weight, so isolating every period can create a misleading sense of volatility.

The same logic applies in executive reporting. Leadership teams often need a directional view they can follow over time, not a chart that moves dramatically from month to month. When the main goal is to understand whether customer loyalty is broadly improving, holding steady, or weakening, cumulative NPS usually provides a clearer signal.

Typical examples include B2B SaaS companies with a limited customer base, enterprise vendors managing a smaller number of high-value accounts, consulting and professional services firms, and regulated sectors where survey collection is less continuous.

The key point is simple: cumulative NPS is most useful when the main risk is overreacting to noisy data, not missing fast-moving deterioration. When that is the challenge, a smoother view is not a weakness. It is a safeguard.

Where Period-Specific NPS Is the Better Operational Lens

If cumulative NPS is better at stabilizing the signal, period-specific NPS is better at showing when something new has happened.

This becomes especially useful when feedback is collected continuously and response volume is strong enough for each period to stand on its own. In that setup, the job of the chart is not just to show the broad direction of sentiment. It is to help teams detect change early and connect it to something real.

That is where period-specific NPS becomes much more actionable. It works especially well in always-on transactional CX programs, where feedback is tied to orders, onboarding steps, support interactions, deliveries, or other defined touchpoints. In those environments, a drop in one month may point to something concrete: a release that introduced friction, a fulfillment issue, a support backlog, a staffing change, or a seasonal spike that strained the experience.

This is what makes the view operationally valuable. It helps teams connect feedback to timing, and timing often determines whether a problem gets addressed early or allowed to spread.

That is why period-specific reporting is such a strong fit for ecommerce and DTC brands, marketplaces, subscription businesses, logistics-heavy operations, hospitality, healthcare journeys with frequent touchpoints, and SaaS companies with continuous onboarding or regular product release cycles.

In these environments, teams often need to answer questions like: Did sentiment dip after the rollout? Did the delivery issue show up in feedback? Did support quality weaken during peak season?

Those are not broad trend questions. They are timing questions. And that is why period-specific NPS becomes most valuable when the main risk is not noise, but latency.

In other words, the problem is not that the chart may fluctuate a little more. The problem is that by the time a cumulative trend visibly moves, the issue may already have spread into support load, reviews, churn risk, or team workload.

Period-specific NPS helps shorten that lag. It makes emerging changes visible while there is still time to investigate and respond.

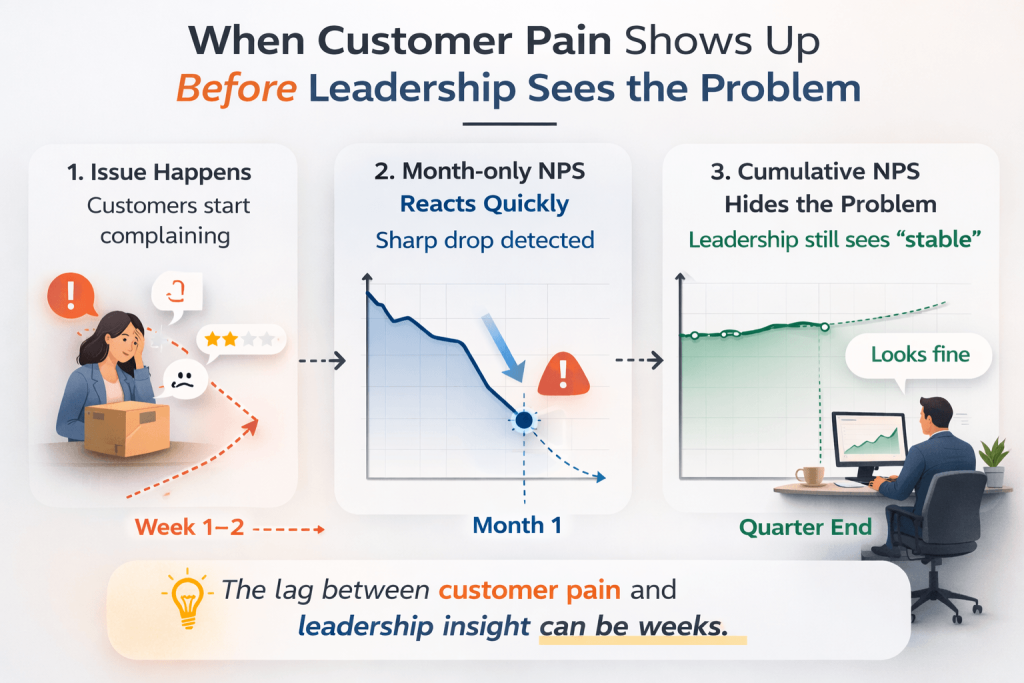

The Blind Spot Nobody Talks About: When the Wrong View Delays Attention

The real downside of the wrong reporting view is not analytical, but organizational. Imagine an ecommerce brand that has spent the last year delivering a strong post-purchase experience. Orders arrive on time, packaging is solid, support is responsive, and customers are generally happy. The cumulative NPS trend looks healthy and stable.

Then month 13 goes wrong. A warehouse issue begins affecting fulfillment. Orders ship late. Some parcels arrive damaged. Complaint themes start clustering around delivery delays and resolution speed. Customers feel the difference immediately, and frontline teams feel it too.

In a period-specific view, that shift appears quickly. In a cumulative view, it can look surprisingly small because the bad month is still being held up by the stronger ones that came before it.

That is where the blind spot appears. Customers are reacting now. Frontline teams are dealing with it now. But leadership looking only at the cumulative trend may still see the overall experience as broadly healthy.

That creates a lag between customer pain and organizational attention. And that lag is costly. By the time the cumulative chart moves enough to trigger concern, the problem may already have spread into support pressure, negative reviews, retention issues, and weaker repeat purchase behavior.
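The size of that lag can be estimated with a small sketch. It assumes every month has the same response volume, so the cumulative score is a plain average of monthly scores. The function name `months_until_alert`, the alert threshold, and the scores are all hypothetical.

```python
def months_until_alert(healthy_months, healthy_nps, degraded_nps, threshold):
    """Count how many degraded months pass before cumulative NPS falls below threshold.

    Assumes at least one healthy month and equal response volume every month,
    so the cumulative score is the simple average of the monthly scores.
    Returns None if the cumulative score could never cross the threshold.
    """
    total = healthy_months * healthy_nps
    months = healthy_months
    if total / months < threshold:
        return 0  # already below the threshold before anything went wrong
    if degraded_nps >= threshold:
        return None  # the running average can never drop below the threshold
    degraded = 0
    while total / months >= threshold:
        total += degraded_nps
        months += 1
        degraded += 1
    return degraded

# Twelve healthy months at NPS 50, then every month comes in at NPS 10.
# A period-specific chart falls below an alert line of 40 in the first bad month.
lag = months_until_alert(healthy_months=12, healthy_nps=50, degraded_nps=10, threshold=40)
print(lag)  # 5
```

Under these assumptions the cumulative line needs five consecutive bad months to cross the same alert level that the period-specific line crosses in one. The longer the healthy history, the longer that delay becomes.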

The tricky part is that cumulative NPS is not hiding the problem because it is flawed. It is hiding it because it is doing exactly what it was designed to do: preserve the weight of the past.

That works well when the goal is long-term relationship tracking. But when teams need to see recent damage clearly, it can delay the moment when the organization recognizes that something needs attention.

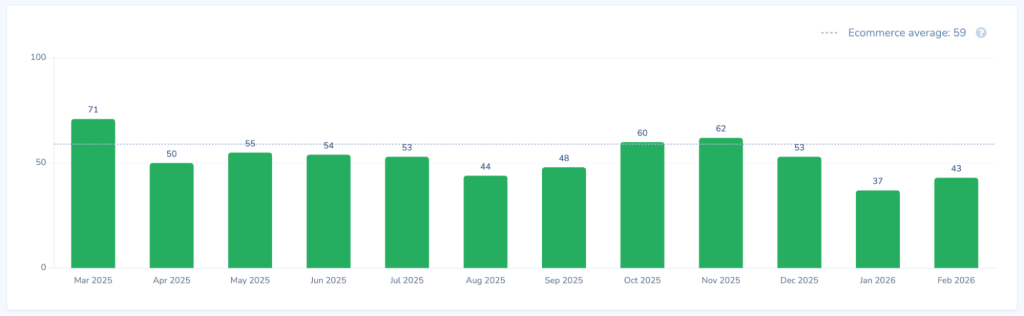

Sample Size Changes the Meaning of the Chart

This is where a lot of NPS interpretation goes wrong. Teams see a jump or a drop and immediately start explaining it. But before assigning meaning to any change, one question has to come first: how many responses are actually behind it?

The usefulness of either view depends heavily on response volume. If only 12 responses came in during a given month, one additional Detractor can materially change the score. In that case, a sharp drop in period-specific NPS may look dramatic without necessarily pointing to a broad CX issue. It may simply reflect normal small-sample volatility.

Now compare that with a month where 1,200 responses came in. If the score drops by the same margin there, the interpretation changes completely. At that scale, the movement is far less likely to be random noise and far more likely to reflect something operationally real.

So the same chart behavior can mean very different things depending on the sample behind it. That is why period-specific NPS should not automatically be treated as the smarter view just because it is more responsive. Sensitivity only helps if the signal is supported by enough data.
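One way to quantify this is an approximate margin of error. The sketch below treats each response as +1 (Promoter), 0 (Passive), or -1 (Detractor) and applies a normal approximation, which is only a rough guide at very small sample sizes. The function name `nps_margin_of_error` and the response mixes are assumptions made for illustration.

```python
import math

def nps_margin_of_error(promoters, passives, detractors, z=1.96):
    """Approximate margin of error for NPS, in points (z=1.96 for roughly 95%).

    Each response is scored +1, 0, or -1, so the per-response variance is
    (p + d) - (p - d)^2, where p and d are the promoter and detractor shares.
    """
    n = promoters + passives + detractors
    p = promoters / n
    d = detractors / n
    variance = (p + d) - (p - d) ** 2
    return 100.0 * z * math.sqrt(variance / n)

# Same response mix (50% promoters, 33% passives, 17% detractors), different volumes.
moe_small = nps_margin_of_error(6, 4, 2)        # 12 responses
moe_large = nps_margin_of_error(600, 400, 200)  # 1,200 responses

# How far the score moves if a single Passive had answered as a Detractor instead.
swing_small = 100.0 * 1 / 12
swing_large = 100.0 * 1 / 1200

print(round(moe_small, 1), round(moe_large, 1))
print(round(swing_small, 2), round(swing_large, 2))
```

For this mix, the margin of error is about 42 points at 12 responses and about 4 points at 1,200. A single changed answer moves the score by more than 8 points in the small sample and by less than a tenth of a point in the large one.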

A good habit is to pause before reacting to any monthly change and ask a few basic questions:

- How many responses are behind it?

- Is this within the normal variance we typically see?

- Does it align with other signals such as support volume, delivery performance, churn comments, refund reasons, or complaint themes?

Those checks matter because charts should not be interpreted in isolation. A drop becomes much more meaningful when it appears alongside operational evidence pointing in the same direction.
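Those three questions can be turned into a simple triage routine. This is a sketch under stated assumptions, not a statistical test: the function name `triage_change`, the 100-response minimum, and the two-standard-deviation band are arbitrary illustrative choices that each team would need to tune.

```python
from statistics import mean, stdev

def triage_change(current, history, responses, min_responses=100,
                  corroborating_signals=0):
    """Give a rough label to a monthly NPS reading.

    current: this month's period-specific NPS
    history: recent monthly NPS values (at least two) used as the baseline
    responses: number of responses behind the current reading
    corroborating_signals: count of other indicators pointing the same way,
        such as support volume or delivery performance (hypothetical input)
    """
    # Question 1: how many responses are behind it?
    if responses < min_responses:
        return "low volume: wait for more data"
    # Question 2: is it within the variance we typically see?
    if abs(current - mean(history)) <= 2 * stdev(history):
        return "within normal variance"
    # Question 3: does it align with other signals?
    if corroborating_signals == 0:
        return "unusual: look for operational evidence"
    return "investigate"

baseline = [48, 52, 50, 49, 51]
print(triage_change(30, baseline, responses=12))
print(triage_change(49, baseline, responses=1200))
print(triage_change(30, baseline, responses=1200, corroborating_signals=2))
```

The order of the checks mirrors the list above: volume first, then normal variance, then corroboration from outside the survey data.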

This is also why the debate should never collapse into “monthly is better.” In high-volume CX programs, period-specific views can be extremely revealing. In low-volume ones, they can become overly dramatic. The reporting logic matters, but so does the amount of data holding it up.

A Better Reporting Model: One View for Monitoring, Another for Meaning

At this point, the goal should not be to declare one view the winner. That is usually where reporting discussions become too simplistic. A better model is to treat NPS as a two-layer reporting system.

The first layer is strategic monitoring. This is where cumulative NPS still does its best work. It helps answer questions like: Are we improving over time? Is relationship health directionally stronger than it was before? How does the selected period look overall? Those are monitoring questions. They are about direction, continuity, and the broader shape of customer sentiment.

The second layer is diagnosis. This is where period-specific NPS becomes much more useful. It helps answer questions like: What changed this month? Did the release, incident, staffing issue, or fulfillment delay show up in feedback? Which periods deserve a closer look? Those are meaning questions. They are about timing, causality, and investigation.

Seen this way, cumulative and period-specific reporting are not competing views. They are doing different jobs within the same reporting model. That is why the most mature teams do not choose one view forever. They separate monitoring from explanation.

They use cumulative reporting to maintain a stable view of long-term CX direction, and period-specific reporting to investigate movement, connect sentiment shifts to real events, and understand when something actually changed.

A Simple Decision Framework

If you are not sure which view should take the lead, the easiest place to start is the job the chart needs to do.

| Factor | Lean cumulative | Lean period-specific |
| --- | --- | --- |
| Monthly response count | Low or inconsistent | High and stable |
| Survey cadence | Quarterly / biannual | Continuous / transactional |
| Main job of the report | Directional trend | Issue detection |
| Audience | Leadership / board | CX / Ops / Product |
| Need to connect score to specific events | Low | High |
| Tolerance for volatility | Low | Higher |
| Main risk | Overreacting to noise | Missing emerging problems |

This framework helps shift the decision away from preference and back to reporting purpose.

If the goal is stable directional tracking, cumulative NPS is usually the better fit. If the goal is issue detection and operational investigation, period-specific NPS becomes much more useful.

When in doubt, choose based on the question the team needs the chart to answer, not on which line looks cleaner.

Why the Comparison Is Fair: The Underlying Logic Stays the Same

Whenever teams compare two reporting views, a reasonable question comes up: are we looking at the same underlying data, or are the counting rules changing, too?

That matters, because if the respondent logic changes between views, the comparison becomes much less meaningful. A score might look different not because the time window changed, but because the system counted people differently.

That is not what is happening here. In Retently, the deduplication logic remains consistent across both views. Each mode uses the last response per person, so the underlying respondent handling does not change between cumulative and month-only reporting.

That means the difference between the two charts is not caused by one report counting people differently. It is caused by the reporting window itself.

So when the views tell different stories, that difference is meaningful. It reflects two different ways of reading the same underlying feedback, not a mismatch in how respondents are included.
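As an illustration of what "last response per person" can look like, here is a minimal sketch. It is not Retently's implementation. It assumes the rule is applied within whichever date window a view covers, and the tuple layout, function names, and sample responses are all hypothetical.

```python
from datetime import date

def last_response_per_person(responses):
    """Keep only each person's most recent score.

    responses: iterable of (person_id, response_date, score) tuples.
    """
    latest = {}
    for person, day, score in responses:
        if person not in latest or day >= latest[person][0]:
            latest[person] = (day, score)
    return {person: score for person, (_, score) in latest.items()}

def nps_from_scores(scores):
    """Standard NPS bands: 9-10 are Promoters, 0-6 are Detractors."""
    scores = list(scores)
    if not scores:
        raise ValueError("no responses in the selected window")
    promoters = sum(s >= 9 for s in scores)
    detractors = sum(s <= 6 for s in scores)
    return 100.0 * (promoters - detractors) / len(scores)

def window_nps(responses, start, end):
    """NPS for a date window, with the same deduplication rule for any window."""
    in_window = [r for r in responses if start <= r[1] <= end]
    return nps_from_scores(last_response_per_person(in_window).values())

responses = [
    ("ana", date(2024, 5, 10), 10),
    ("ben", date(2024, 5, 20), 9),
    ("cy", date(2024, 6, 3), 9),
    ("ana", date(2024, 6, 12), 4),  # ana answers again in June
]

cumulative_to_june = window_nps(responses, date(2024, 5, 1), date(2024, 6, 30))
june_only = window_nps(responses, date(2024, 6, 1), date(2024, 6, 30))
print(round(cumulative_to_june, 1), june_only)
```

Both calls go through the same `last_response_per_person` step. Only the `start` and `end` dates differ, which is the sense in which the difference between the views comes from the window rather than from the counting rules.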

How to Use This in Retently

If you want to explore the difference on your own CX data, go to Reports > NPS Monthly in Retently and switch between Cumulative and Month only. The reporting feature is not limited to NPS, but extends to any CX metric of interest.

The easiest way to use the feature is to keep the timeframe the same and compare the two views directly. That makes it much easier to spot months that look fairly harmless in the cumulative trend but stand out much more clearly in the month-only one.

In practice, the two modes serve different purposes. The cumulative view is better for telling the broader story of how customer sentiment has evolved across the selected period. The month-only view is better for reviewing individual periods, spotting sudden drops, and identifying where a deeper operational investigation may be needed.

Used together, they give teams two complementary lenses on the same feedback: one for executive storytelling, the other for operational review.

Conclusion

Cumulative reporting became the default for good reason. It creates smoother narratives and steadier trend lines, which can be genuinely useful for long-term relationship tracking and high-level reporting.

But smooth trends are not always what CX teams need. When the goal is to understand long-term customer health, cumulative NPS can provide the right perspective. When the goal is to catch deterioration while it is still recent, specific, and fixable, period-specific NPS is often more revealing.

That choice is not cosmetic. It affects what the organization sees, how quickly it reacts, and which problems stay visible long enough to get addressed. The most valuable CX chart is not the cleanest one, but the one that helps your team notice the right problem before it becomes harder to fix.

Switch between both views in your Retently dashboard and check whether your “stable trend” is actually hiding a very unstable month.

Greg Raileanu

Christina Sol
