Survey response rates are dropping. Not randomly. And not for the reasons most teams assume. Some brands are still getting strong engagement. Others are watching their surveys fade into silence, even when timing, questions, and incentives look “right” on paper.

So what’s really changing? And in 2026, what does it actually take for a customer to stop and answer? That’s what this Retently study sets out to unpack.

This isn’t a theory piece or a list of generic tips. It’s based on large-scale data from millions of surveys sent by brands in 2025, drawn from our own aggregated dataset across ecommerce accounts, looking at how response rates behave across channels, question types, industries, and stages of the customer relationship.

The goal isn’t just to label rates as “high” or “low,” but to understand the patterns behind them: who still responds, when they do, and what makes the difference.

In short, the question shifts from “Why are response rates low?” to “When do customers still feel it’s worth answering and why?”

Key Takeaways

- Customer feedback is more important than ever. Attention is harder to win, but understanding sentiment is a strategic necessity, not an optional metric.

- Email and NPS still work when used with intent. They remain essential for scalable and relationship-level listening, but perform best when targeted and well-timed.

- Context boosts participation. In-flow and moment-based surveys complement email by capturing feedback when experiences are still fresh.

- Response rate reflects trust, not just tactics. Higher participation comes from stronger relationships, recognizable senders, and respectful frequency.

- Personalization is vertical-dependent, not universally positive. In emotionally driven categories, it can multiply response rates. In high-volume categories, it can signal automation and reduce engagement. Relevance wins, but only when it feels real.

- Increasing send volume can grow total responses, but beyond a certain point, the return per thousand messages drops. More surveys do not automatically mean more insight. When attention becomes the bottleneck, additional volume mostly creates fatigue, not signal.

- The shift is toward precision, not less listening. Smarter segmentation, timing, and channel mix keep survey programs effective without exhausting customers.

Retently Response Rate Study Data & Methodology

This study looks at what people actually did, not what they said they would do.

It’s based on survey activity from 600 ecommerce brands, covering over 25 million survey invitations sent between January and December 2025. All major feedback touchpoints used in ecommerce were included: email invitations, in-app prompts displayed during active usage, and direct-access survey links (for example, via QR codes, website widgets, or signature links).

To focus on real customer behavior, we excluded test data and imported historical surveys. What remains reflects live, production feedback programs and captures how customers chose to engage (or ignore) across an entire year, including seasonality and the high-pressure Q4 period.

Throughout the study, response rate is defined as the share of sent surveys that received at least one valid response:

Response Rate = (Surveys with a response) ÷ (Total surveys sent) × 100

We’re measuring participation, not opens or clicks.
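As a minimal sketch, the definition above can be written as a small helper. The function name and the example counts are hypothetical and chosen only to illustrate the arithmetic; they are not taken from the dataset.

```python
def response_rate(surveys_with_response: int, surveys_sent: int) -> float:
    """Return the response rate as a percentage of surveys sent."""
    if surveys_sent <= 0:
        raise ValueError("surveys_sent must be positive")
    if not 0 <= surveys_with_response <= surveys_sent:
        raise ValueError("surveys_with_response must be between 0 and surveys_sent")
    return surveys_with_response / surveys_sent * 100


# Hypothetical counts, not study data: 324 responses out of 10,000 sends.
rate = response_rate(surveys_with_response=324, surveys_sent=10_000)
print(f"{rate:.2f}%")
```

Note that the numerator counts surveys that received a response, so opens and clicks never enter the calculation.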

Response rates were analyzed across multiple dimensions, including:

- Time (quarterly, to capture seasonality and volume effects)

- Channel (email, in-app, link)

- Survey type (NPS, CSAT, CES)

- Industry vertical (7 ecommerce categories)

- Customer lifecycle stage (first-time to repeat and high-value buyers)

- Personalization (use of dynamic variables)

- Sender identity and infrastructure (own vs shared domain, SMTP provider)

- Mailbox provider (Gmail, Outlook, Yahoo, Apple, etc.)

- Spam complaint signals (as a proxy for trust and reputation)

This structure lets us go far beyond a single “average response rate.” It shows how participation changes with context, relationship depth, technical delivery, and industry dynamics.

In short, the goal isn’t just to see how often customers respond but to understand when, why, and under what conditions answering still feels worth their time.

Core Empirical Findings from 2025

What follows are the key patterns that consistently appear across the dataset. Each section isolates one structural driver of participation and shows how it behaves under real operating conditions.

1. Volume vs Engagement

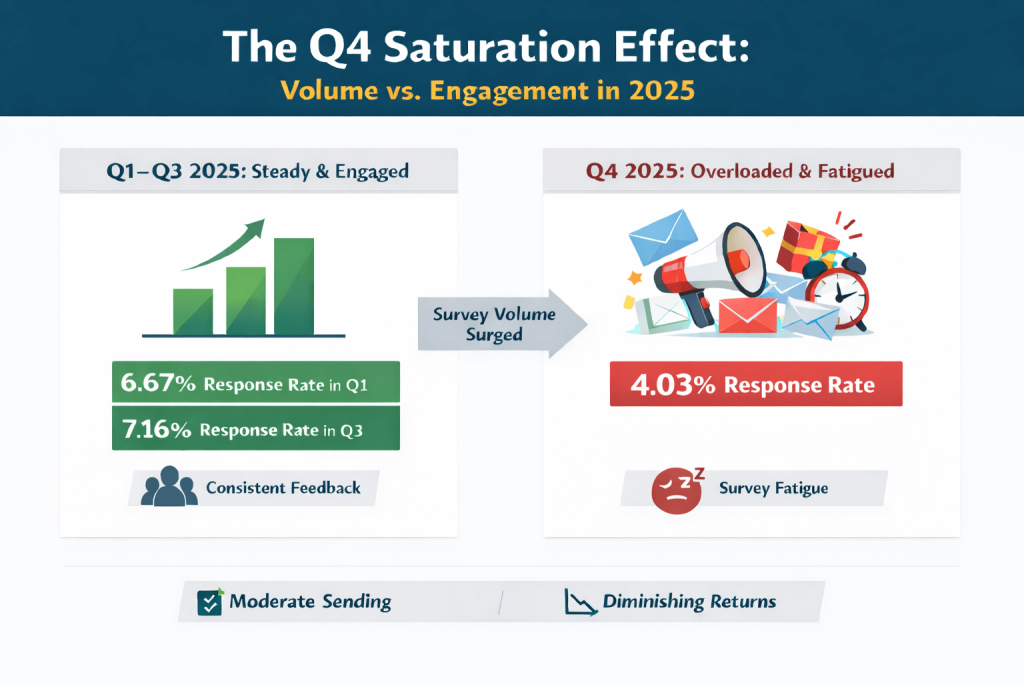

One of the clearest patterns in the data appears when we simply line up survey volume and response rates over the four quarters of 2025.

During Q1, Q2, and Q3, survey sending volume remained relatively stable, and so did engagement. Response rates hovered in a narrow and stable band, gradually moving from 6.67% in Q1 to 7.16% in Q3. In other words, when the pace of asking stayed moderate and predictable, customers were consistently willing to respond.

Then Q4 happened.

In the last quarter of the year, the number of surveys sent almost doubled. Campaigns intensified, transaction volumes surged, and many brands expanded their feedback programs to capture post-holiday sentiment. On paper, this looks like a logical move: more customers, more touchpoints, more opportunities to listen.

But the response rate tells a different story.

Despite the much larger audience, the share of customers who actually responded dropped to 4.03%, below the full-year average of 5.76%. In absolute numbers, more responses were collected than in previous quarters, but each new batch of surveys brought in fewer voices. The return on every extra thousand emails or in-app prompts was clearly lower.

This is a classic case of diminishing returns:

- Up to a point, increasing volume expands coverage, meaning increased total feedback.

- Beyond that point, attention becomes the bottleneck. Additional volume mostly adds repetition and fatigue, not new insight. Customers receive multiple requests in a short time window, across many brands, alongside promotions, delivery updates, and account messages. Answering a survey simply drops in priority.
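The diminishing-returns arithmetic can be sketched from the reported Q3 and Q4 rates. The rates come from the study; treating "almost doubled" as exactly 2× is an assumption made only for illustration, as are the function and constant names.

```python
Q3_RATE = 7.16  # % response rate reported for Q3 2025
Q4_RATE = 4.03  # % response rate reported for Q4 2025
VOLUME_MULTIPLIER = 2.0  # assumption: "almost doubled" treated as exactly 2x


def responses_per_thousand(rate_pct: float) -> float:
    """Expected responses for every 1,000 surveys sent at a given rate."""
    return rate_pct / 100 * 1000


def relative_change(old: float, new: float) -> float:
    """Percentage change from old to new."""
    return (new - old) / old * 100


q3_per_k = responses_per_thousand(Q3_RATE)
q4_per_k = responses_per_thousand(Q4_RATE)
rate_change = relative_change(Q3_RATE, Q4_RATE)
# Total responses scale with volume times rate.
total_growth = VOLUME_MULTIPLIER * Q4_RATE / Q3_RATE

print(f"Responses per 1,000 sends: Q3 {q3_per_k:.1f}, Q4 {q4_per_k:.1f}")
print(f"Relative change in response rate: {rate_change:.1f}%")
print(f"Total responses vs Q3 under the 2x assumption: {total_growth:.2f}x")
```

Under that assumption, doubling the sends yields only about 13% more responses, which is the coverage-versus-fatigue trade-off in numeric form.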

Seasonality intensifies this dynamic. Q4 is not just busier; it is more fragmented, more stressful, and far more competitive for attention. The data shows that even highly engaged ecommerce audiences become selective once asking intensity crosses a certain threshold.

The key insight here is not that Q4 is “bad for surveys”; it’s that response rate is elastic. It reacts to pressure. When the send volume increases faster than perceived relevance and trust, engagement does not scale linearly – it starts to slip.

For 2026, this has an important implication:

- Response rate cannot be treated as a static benchmark. It is a dynamic outcome shaped by how often, how widely, and at what moments customers are asked (in other words, by frequency, timing and competitive attention density).

- More listening does not automatically mean better listening, especially when everyone is asking at the same time. Programs that optimize for maximum coverage through volume alone risk collecting more data, but from a more tired and less representative slice of customers. Sustainable listening means knowing when more surveys stop adding signal and start adding noise.

In short, Q4 2025 demonstrates that in modern ecommerce environments, attention is the real limiting factor and response rate is one of the first places where that pressure shows up.

Takeaway: Q4 saw nearly double the survey volume, but the response rate fell by about 44% relative to Q3 (from 7.16% to 4.03%). The lesson? More isn’t always better. Customers experiencing survey fatigue during the holiday season are less likely to respond. Maintain consistent, moderate survey volume rather than running aggressive Q4 campaigns. Quality over quantity wins.

2. Channel Effect: Context as the Dominant Driver

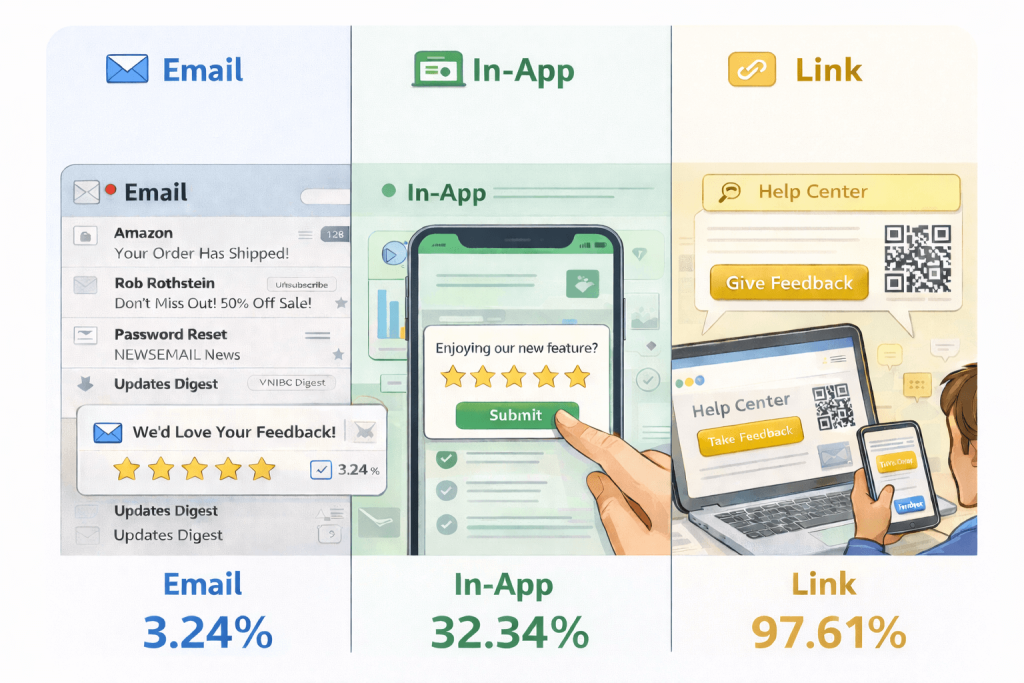

If volume tells us when attention collapses, channel tells us where it survives. When response rates are broken down by delivery channel, the differences are not small tweaks; the channels are in completely different leagues.

Email: Maximum Reach, Minimum Context

Email remains the dominant distribution channel by sheer volume. More than 95% of all surveys in the dataset were delivered through the inbox. But this reach comes at a cost: it’s a noisy, distracted environment.

With an average response rate of 3.24%, email surveys compete with promotions, delivery notifications, password resets, marketing campaigns, and countless other interruptions. The customer is typically:

- In a different task context than the experience being evaluated

- In a high-noise environment

- With no immediate emotional or functional reason to respond

Email excels at scale, but underperforms at engagement. At the same time, it remains the only channel capable of consistently reaching the full customer base, which makes it indispensable for longitudinal, relationship-level measurement (such as NPS), even if its response ceiling is lower.

In-App: Lower Reach, Higher Participation

In-app surveys tell a completely different story. Although they represent only a small fraction of total volume, they achieve an average response rate of 32.34%, roughly 10 times higher than email. This isn’t about better wording; it’s about asking at the right moment.

In-app prompts appear:

- While the customer is actively engaged

- In the same environment as the experience being evaluated

- At a moment of task completion or friction

- Without channel-switching or inbox competition

The psychological cost of responding is minimal. The relevance is immediate. The sender’s identity is unambiguous. The result is a higher willingness to participate.

Link Surveys: Near-Perfect Completion, But Self-Selected

Link-based surveys yield response rates of nearly 100%, at 97.61%. At first glance, this looks like ideal performance. In reality, it reflects selection, not persuasion.

These surveys are accessed by users who:

- Actively choose to click a feedback button

- Scan a QR code

- Follow a help center or signature link

Once they arrive, they almost always complete. However, this channel does not address the participation problem; it filters for already motivated respondents. The data is high-intent, but not completely representative of the broader population.

Temporal Stress Test: How Channels Behave Under Q4 Pressure

The quarterly breakdown confirms that these channel differences are not cosmetic, accidental or seasonal anomalies. They persist, and in fact widen, under attention stress.

Email response rates decline steadily from 4.09% in Q1 to just 2.50% in Q4, showing strong sensitivity to volume pressure and inbox congestion during peak season. In-app surveys, by contrast, remain remarkably stable throughout the year, fluctuating in a narrow 30-35% band even during the high-traffic holiday period. Link-based surveys show virtually no seasonal variation at all, with completion rates staying above 97% in every quarter.

This pattern reinforces the underlying mechanism: inbox-based feedback is elastic to attention saturation, in-flow feedback is resilient to it, and self-initiated feedback reflects selection rather than persuasion.

The Reach vs Completion Trade-off

The three channels form a clear triangle. Email maximizes exposure but minimizes situational relevance. Link maximizes relevance but minimizes population coverage. In-app occupies the structural sweet spot: high relevance with controlled reach.

For 2026, the message isn’t “stop using email.” It’s “don’t expect email alone to do all the listening.” Programs that combine inbox reach with in-app context will consistently capture more, and more representative, feedback.
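To see why a single blended average says little about any one channel, here is a rough sketch of a volume-weighted response rate. The per-channel rates are the ones reported above; the volume shares are hypothetical placeholders (the study only states that email exceeds 95% of sends), so the result is illustrative and not a figure from the dataset.

```python
# Reported average response rates by channel (%).
CHANNEL_RATES = {"email": 3.24, "in_app": 32.34, "link": 97.61}

# Hypothetical volume shares, NOT from the study; they only need to sum to 1.
HYPOTHETICAL_SHARES = {"email": 0.95, "in_app": 0.035, "link": 0.015}


def blended_rate(rates: dict[str, float], shares: dict[str, float]) -> float:
    """Volume-weighted average response rate across channels."""
    if abs(sum(shares.values()) - 1.0) > 1e-9:
        raise ValueError("shares must sum to 1")
    return sum(rates[channel] * shares[channel] for channel in rates)


blended = blended_rate(CHANNEL_RATES, HYPOTHETICAL_SHARES)
print(f"Blended response rate: {blended:.2f}%")

# Each channel's contribution to the blended rate, in percentage points.
for channel, share in HYPOTHETICAL_SHARES.items():
    print(f"{channel:<7} contributes {CHANNEL_RATES[channel] * share:.2f} points")
```

Small shifts in the in-app or link share move the blended number noticeably, which is one reason to benchmark each channel separately.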

3. Survey Type Effect: Transactional Specificity vs Relational Abstraction

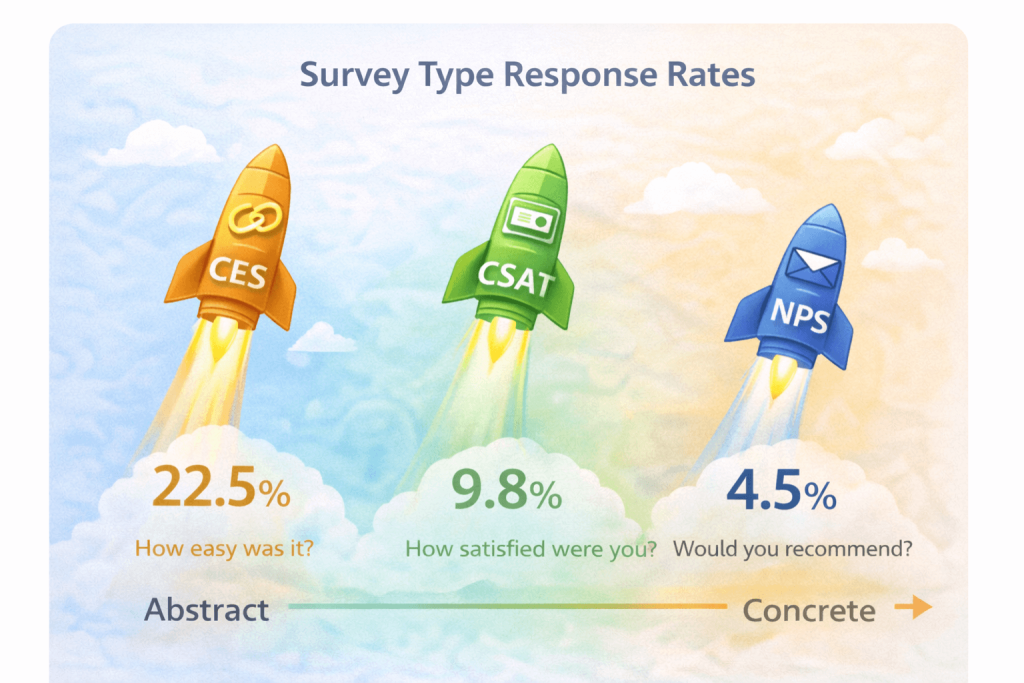

Beyond channel and volume, the type of question you ask has a big impact on whether people actually reply. In the 2025 dataset, the differences between NPS, CSAT, and CES reflect very different levels of mental and emotional effort.

The pattern is clear. Questions tied to a specific, recent experience get far more answers than questions that ask for a big-picture judgment.

CES leads by a wide margin (22.54%), CSAT follows (9.76%), and NPS trails at 4.5%. What’s interesting is that this isn’t about popularity; NPS is by far the most widely used metric in the dataset. It’s about how much thinking the question actually asks of the customer.

NPS asks for a step back: “How likely are you to recommend this brand?” To answer, people have to think across multiple interactions, weigh feelings and expectations, and make a statement about the relationship as a whole. That’s a heavier, more reflective task.

CSAT and CES, on the other hand, stay close to the moment. “How satisfied were you?” “How easy was it?” These are quick, concrete reactions to something that just happened. There’s no need to generalize, imagine, or make a social statement. You simply recall and respond. Saying “this was easy” or “this was frustrating” feels safe. Saying “I would recommend this brand” feels more like making a statement about yourself.

This difference becomes even clearer under pressure. NPS drops the most in Q4, when attention is most fragmented, falling to just 3.07%. CSAT remains stable and even improves slightly, while CES, although still the highest, softens as customers become more rushed. In short, when people are under time pressure, they still answer simple experience questions, but they postpone big-picture, reflective questions.

Repositioning Advocacy for 2026

The data does not say that advocacy is less important. It says that advocacy is harder to ask for.

NPS is a “big question”. It asks for reflection, trust, and a bit of emotional commitment. CSAT and CES are “small questions”. They ask for quick reactions to something that just happened.

That’s why CES gets five times the response rate of NPS, and CSAT more than double, without making NPS any less valuable as a strategic metric.

The implication for 2026 is not to abandon NPS, but to use it more intentionally. Treat it less like a background pulse and more like a high-trust check-in, sent when the relationship is warm, the experience is fresh, and the customer actually has the mental space to think about the brand as a whole.

Transactional questions (CSAT, CES) scale well for continuous listening. Advocacy questions (NPS) work best when they are timed, targeted, and framed as meaningful moments of dialogue, not just another automated ask.

4. Vertical Sensitivity: Industry-Specific Participation Patterns

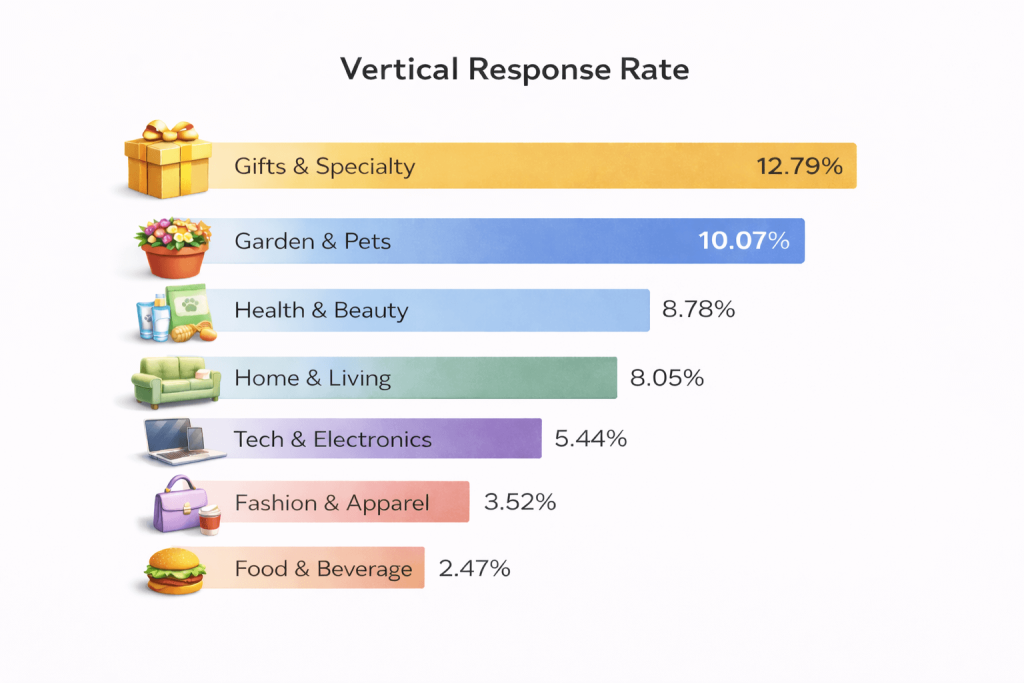

Response rate is not only a function of channel or survey design. It is also strongly shaped by the industry context in which the relationship exists. When the same feedback mechanisms are applied across different ecommerce verticals, participation varies by a factor of five.

At a glance, Gifts & Specialty and Garden & Pets are “high-participation” verticals, while Fashion & Apparel and Food & Beverage sit in a much lower engagement zone. That doesn’t mean those categories are “bad at surveying”; it means the baseline willingness to answer is structurally different by category.

The data shows vertical “personality,” not just rank. Gifts & Specialty leads with a 12.79% response rate. Garden & Pets shows consistently high engagement (10-12%) across all quarters. Fashion & Apparel and Food & Beverage are consistently low, at about 3.5% and 2.5% respectively. Home & Living behaves like a high-response vertical until Q4, when its response rate breaks down.

Why Vertical Matters: Emotion, Frequency, and Cognitive Investment

Three factors help explain why response behavior looks so different across industries:

- Emotional involvement: Categories like Gifts, Health & Beauty, and Pets are often tied to care, identity, or relationships. Giving feedback feels more personal, so customers are more willing to engage.

- Purchase rhythm: High-frequency categories (Fashion, Food) generate many touchpoints but also more fatigue. Lower-frequency but emotionally loaded categories (Gifts, Home projects, Pet care) trigger fewer requests, but each one feels more meaningful.

- Decision weight: When a purchase involves more thinking or higher stakes (Home, Electronics), customers are more likely to reflect and articulate how it went. Routine purchases invite less reflection.

These forces create very different attention climates across verticals. A 3% response rate can be perfectly normal in Fashion, while the same number in Health & Beauty would signal a real drop in engagement.

But here’s the real upgrade: vertical doesn’t just change how much people respond, it changes what actually works.

What changes when we split by channel (vertical × channel)

Email response rates vary massively by vertical, even before you factor in copy, timing, or survey design. For example, Garden & Pets reaches ~10% on email, roughly four times the ~2.3% seen in Fashion. Health & Beauty shows strong email engagement at ~8.8%, while Food & Beverage sits at the other end of the scale with ~2.5%.

In-app is even more vertical-sensitive. Gifts & Specialty performs exceptionally in-app (~38%), Fashion does well (~28%), while Home & Living is surprisingly low (~6%). That tells us the “in-flow advantage” exists, but it isn’t evenly distributed across categories.

What changes when we split by survey type (vertical × survey type)

Survey type effects are not uniform across verticals. The clearest example is Health & Beauty: NPS is low at 1.18%, while CSAT is strong at 12.37%. That’s not a small difference; it’s a totally different listening strategy.

For example, Gifts & Specialty shines on CES with almost 38% response rate, while Garden & Pets responds extremely well to CSAT (20%). Fashion and Food show much lower engagement across the board, and are far more sensitive to fatigue and timing.

The Q4 Saturation Effect by Vertical

The Q4 collapse observed at the aggregate level is not evenly distributed.

A particularly strong example is Home & Living. For three quarters, it shows some of the highest engagement in the entire dataset, consistently at 16-18% or higher. In Q4, as sending volume surged and promotional pressure intensified, its response rate dropped by nearly 80%, to 3.57%.

This pattern shows that even highly engaged verticals are not immune to attention saturation. In categories where purchase cycles are longer and decision stakes are higher, customers are willing to provide feedback until the volume and competitive noise overwhelm the moment of reflection.

Other industries show the same pattern, just less dramatic. The holiday rush and message overload lower response rates everywhere, but each category feels that pressure differently.

Implications for 2026

All of this points to one simple idea: there is no single “good” response rate that applies to everyone. What looks strong in one vertical may be weak in another, and what works in one category can fall flat in the next.

Emotionally charged categories like Gifts or Pets can support more frequent feedback, as long as the timing feels right. High-frequency, low-involvement categories like Fashion or Food need a lighter touch and better-timed moments, or fatigue sets in quickly. Categories with longer, higher-stakes decisions, like Home & Living, are especially sensitive to seasonality and can see engagement drop sharply when Q4 noise peaks.

In short, participation is not only a customer trait; it is an industry-conditioned behavior.

5. The Personalization Paradox

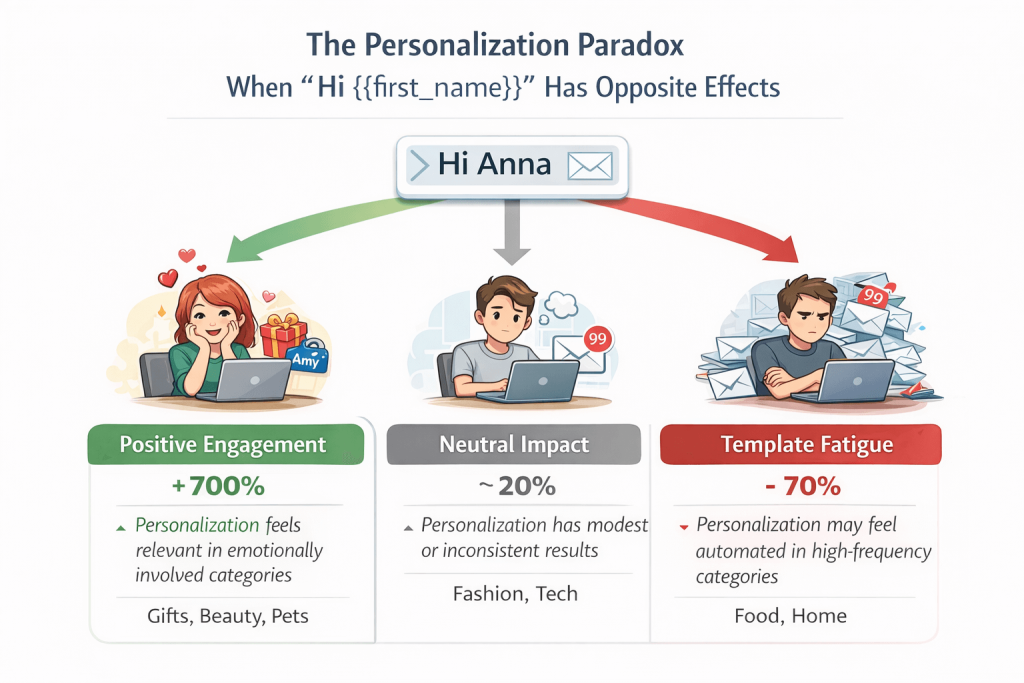

Personalization is often assumed to be a universal lever for higher engagement. The 2025 data show that this assumption is only partially true. At an aggregate level, personalization delivers a small positive effect. At a vertical level, however, its impact ranges from dramatically positive to clearly negative. In some categories, it reads as attention. In others, it reads as automation.

Aggregate Effect: A Modest Lift

The overall lift from non-personalized to personalized subject lines is around 6% (3.16% to 3.34%) – statistically real, but strategically modest. On its own, this would suggest that personalization is a nice-to-have optimization, not a game-changer. It’s the kind of improvement that helps at the margins, but won’t rescue a weak program or compensate for bad timing, over-sending, or poor deliverability.

But this average hides a far more interesting story. Because the “same” personalization tactic (often just a first-name token) behaves completely differently depending on what kind of category relationship the customer thinks they’re in.

How Personalization Behaves Over Time (Quarterly Pattern)

Two takeaways pop out here:

- Personalization “wins” in Q2-Q3, with response rates of 4.4-5% vs 3% without it (when attention is less congested and emails are more readable).

- In Q4, personalization loses (2.4% vs 2.7%), which fits our broader saturation story: once the inbox becomes crowded and promotional, “Hi {{first_name}}” can start feeling like just another automated message.

So even at the aggregate level, personalization is not stable, it’s seasonal and attention-sensitive.

Vertical Divergence: Three Personalization Regimes

When broken down by industry, personalization does not behave as a single effect. It splits into three distinct regimes.

1. Positive Personalization Regime (Strong Lift)

| Vertical | Personalized | Non-Personalized | Relative Lift |
| --- | --- | --- | --- |
| Gifts & Specialty | 25.78% | 2.98% | +765% |
| Health & Beauty | 12.45% | 1.43% | +771% |
| Garden & Pets | 12.11% | 4.81% | +152% |

In these categories, personalization acts as a relevance amplifier. Purchases are emotionally loaded (gifts, self-care, pets), and seeing one’s name or a familiar reference signals that the message is meant for me, not “customers in general.” Personalization restores a sense of individual recognition and trust, sharply increasing willingness to engage.

It’s also worth noting how extreme the gap is: in Gifts & Specialty, personalization doesn’t “optimize” response, it completely changes whether people treat the message as worth their attention.

2. Neutral Personalization Regime (Marginal Impact)

| Vertical | Personalized | Non-Personalized | Relative Lift |
| --- | --- | --- | --- |
| Fashion & Apparel | 2.68% | 2.20% | +22% |
| Tech & Electronics | 4.95% | n/a | n/a |

Here, personalization helps slightly or inconsistently. The product relationship is more functional or trend-driven, and the customer’s identity is less tightly bound to the brand interaction. A first name token neither strongly increases nor decreases perceived relevance.

This is an important “permission” takeaway: if you’re in these categories, you don’t need to obsess over first-name personalization. Your bigger wins are likely timing, lifecycle targeting, and channel mix.

3. Negative Personalization Regime (Backfire Effect)

| Vertical | Personalized | Non-Personalized | Relative Impact |
| --- | --- | --- | --- |
| Food & Beverage | 1.83% | 5.72% | -68% |
| Home & Living | 1.20% | 5.94% | -80% |

In these verticals, generic first-name personalization is associated with lower response rates. The likely mechanism is not a dislike of being addressed by name, but rather template fatigue and a loss of credibility. When a high-volume, promotion-heavy category uses generic personalization, the message may feel more automated, more “marketing-like,” and therefore less worthy of attention than a clean, brand-centric subject line.

Interpreting the Paradox: Relevance Signaling vs Template Fatigue

The data suggest that personalization works through two opposing psychological mechanisms:

- Relevance signaling: In emotionally involved categories, personalization communicates care, recognition, and individual relevance. It reduces the perceived distance between brand and customer, increasing the likelihood of response.

- Template fatigue: In high-volume, low-involvement categories, the same personalization tokens might signal automation rather than attention. Customers recognize the pattern, downgrade the message’s importance, and disengage.

Which mechanism dominates depends on the meaning of the relationship, not the technology of personalization itself.

Seen through this lens, the vertical results become much easier to interpret:

- Gifts & Specialty and Health & Beauty respond at more than 8× the non-personalized rate (25.78% vs ~3%, and 12.45% vs ~1.4%), meaning a name can be the difference between being ignored and being taken seriously.

- Garden & Pets shows a strong but more moderate effect (about 2.5×), confirming that emotional relevance helps, but doesn’t fully override context.

- Food & Beverage and Home & Living perform worse with personalization (-68% and -80%), a clear signal of template fatigue in high-volume, promotion-heavy categories.

- In these verticals, simple, brand-led subject lines outperform “Hi {{first_name}}”, suggesting that credibility beats familiarity.
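The relative lift figures in the three regime tables can be reproduced with a few lines of arithmetic. The sketch below uses the personalized and non-personalized rates reported above; the function and variable names are illustrative. Tech & Electronics is left out because no non-personalized baseline is reported.

```python
# (personalized %, non-personalized %) as reported in the regime tables.
RATES = {
    "Gifts & Specialty": (25.78, 2.98),
    "Health & Beauty": (12.45, 1.43),
    "Garden & Pets": (12.11, 4.81),
    "Fashion & Apparel": (2.68, 2.20),
    "Food & Beverage": (1.83, 5.72),
    "Home & Living": (1.20, 5.94),
}


def relative_lift(personalized: float, baseline: float) -> float:
    """Percentage change of the personalized rate over the baseline rate."""
    return (personalized - baseline) / baseline * 100


for vertical, (personalized, baseline) in RATES.items():
    lift = relative_lift(personalized, baseline)
    ratio = personalized / baseline
    print(f"{vertical:<18} lift {lift:+5.0f}%   ratio {ratio:.2f}x")
```

Lift and ratio describe the same gap in different units: a +765% lift means the personalized rate is about 8.65 times the baseline, while a -80% impact means it is about one fifth of it.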

Implications for 2026

Personalization is not a universal accelerator. It is a context-sensitive trust cue.

For 2026, the evidence points to a shift from “token personalization” to situational relevance:

- In emotionally charged verticals, deeper personalization (moment-based, product-specific, relationship-aware) is likely to remain a powerful participation driver. In those categories, the next step isn’t just using a name, it’s referencing something real: the order, the moment, the experience (“Quick question about your recent order…”) so the email feels like a real follow-up, not a template.

- In commoditized or high-frequency categories, restraint may outperform customization. Clear, credible, brand-anchored messaging may feel more trustworthy than artificial familiarity. In these categories, “clean and credible” often beats “friendly and personalized.” Even a simple subject line that sounds specific and purposeful can outperform a name token.

The key lesson is that personalization does not increase response rates by default. It increases them only when it authenticates relevance. When it merely exposes automation, it accelerates disengagement.

So personalization isn’t a simple lever, it’s a test. If it makes your email feel more human and more specific, it helps. If it makes your email feel more templated, it hurts.

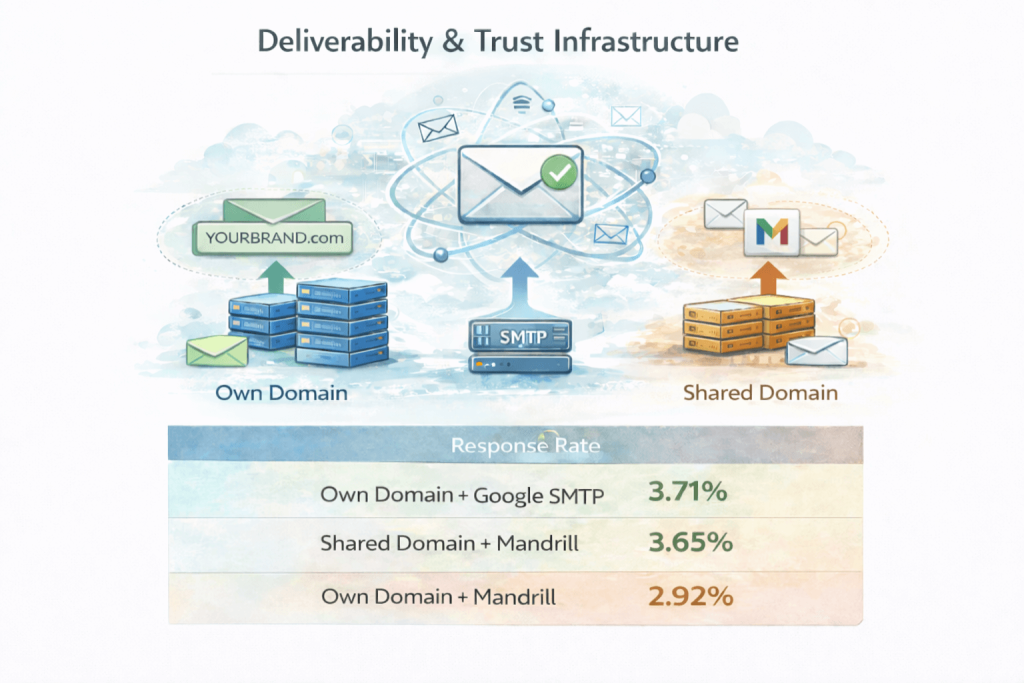

6. Deliverability & Trust Infrastructure

Behind every response rate sits a more basic question: did the message arrive, and did it look trustworthy enough to deserve attention? The 2025 data shows that technical delivery and sender identity are not background variables. They are first-order drivers of participation.

Own Domain vs Shared Domain: Trust as a Participation Multiplier

Across the full year, Retently’s shared domain actually performs slightly better than brands’ own domains, with a 3.66% vs 3.12% response rate. This does not invalidate the role of domain identity as a trust cue. Rather, it shows that credibility in practice depends on how well the sending environment is maintained. A properly authenticated, reputation-managed shared domain can perform as well as – or better than – an own domain if transport stability, complaint control, and sending discipline are strong.

Domain identity signals recognition, but recognition alone is not sufficient. Participation is shaped by the combined integrity of identity, transport, and reputation management. In this dataset, the shared domain performs credibly because it is operationally maintained to that standard.

SMTP Transport: Reputation at Scale

Even with similar content and audiences, the infrastructure carrying the message creates a meaningful but bounded difference in participation. Transport reputation, throttling behavior, and inbox placement directly affect whether survey invitations surface at all and in which folder. In this dataset, Google Workspace SMTP leads Mandrill (3.71% vs 2.99%), a ~24% relative difference in participation. The effect is real, but it operates within the structural limits of the inbox.

The quarterly breakdown reveals a deeper pattern: transport quality is not just about average performance, but about stability under pressure. Mandrill shows very high response rates in low-volume quarters, but drops in Q4 when volume and inbox competition peak. Google Workspace SMTP, by contrast, maintains a much narrower and more stable range (roughly 3-4%) across all quarters, even as sending volume increases.

This indicates that deliverability is regime-based rather than linear. Some infrastructures perform well in “calm” conditions but degrade sharply under seasonal load and filtering pressure, while others offer lower peaks but far greater reliability at scale. In practical terms, transport choice becomes a risk-management decision, not just an optimization one: the critical question is not which system can achieve the highest response rate in ideal conditions, but which one preserves visibility and trust when the inbox is most crowded and attention is most scarce.

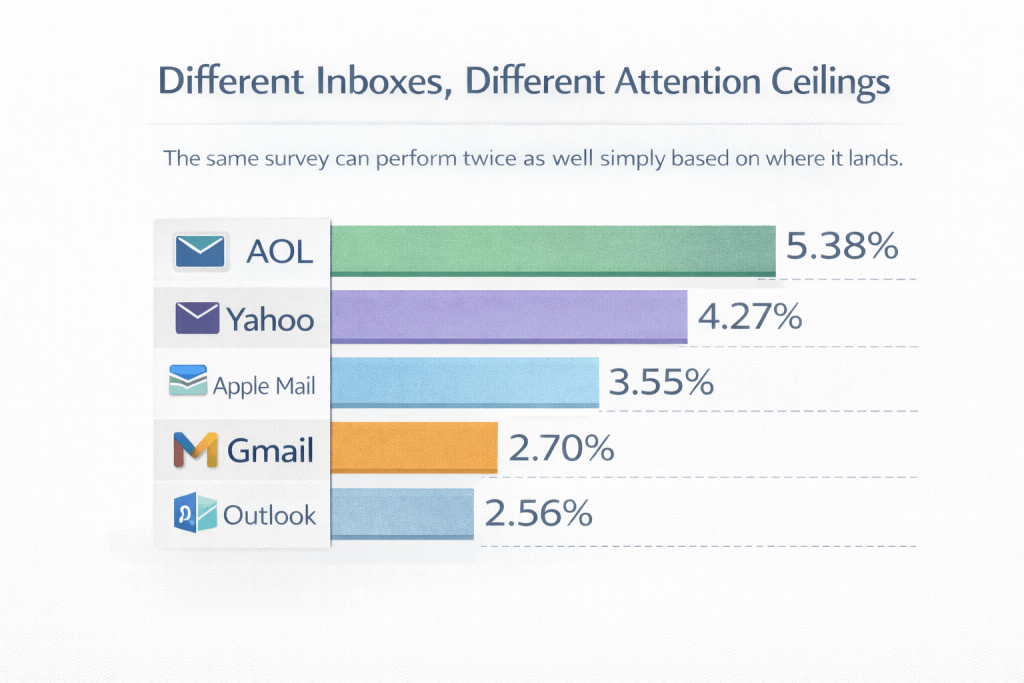

Mailbox Provider Effects: The Attention Environment

But transport is only the first gate. Even after an email is successfully delivered, the mailbox it lands in defines the attention environment.

Different inbox ecosystems have different participation ceilings, reflecting:

- Filtering aggressiveness

- Competition density

- User demographics and engagement habits

The same survey can perform twice as well simply based on where it lands.

Given different attention environments, which categories still break through? This provider effect is not uniform across industries. When response rates are broken down by vertical within each mailbox environment, the same trust and attention patterns repeat, but with very different intensity.

Garden & Pets and Health & Beauty consistently achieve the highest engagement across all major providers. For example, Garden & Pets reaches 9.22% on Gmail, 12.75% on Yahoo, and 14.49% on AOL, while Health & Beauty reaches 7.33% on Gmail, 11.52% on Yahoo, and 13.01% on AOL. These categories retain attention even in more saturated inboxes, confirming that emotional relevance and category involvement amplify the effect of good deliverability.

By contrast, Fashion, Food & Beverage, and Home & Living remain at the bottom across providers, often around 2-3% on Gmail and only moderately higher on Yahoo and AOL. In these high-frequency, low-involvement categories, even strong inbox placement cannot fully compensate for attention fatigue.

AOL stands out as the most responsive environment across nearly all verticals, and especially for niche verticals, not because the brands behave differently, but because the inbox itself is less congested and more relationship-oriented. Gmail and Outlook, where promotional density is highest, consistently show lower participation ceilings regardless of vertical.

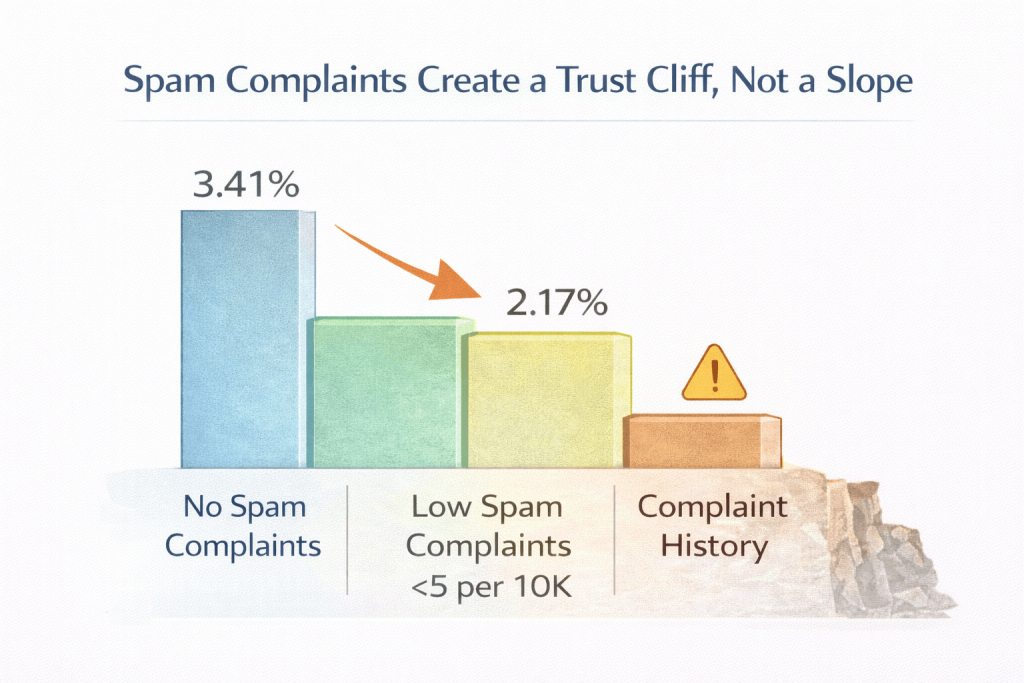

Spam Complaints: The Hidden Participation Tax

Accounts receiving spam complaints experience a 35% lower response rate. This is not only because fewer emails reach the inbox, but because complaint-prone senders enter a reputation spiral:

Lower engagement → worse placement → lower visibility → even lower engagement.

Response suppression becomes cumulative.

Q4 data shows this is a real and lasting effect, not random noise. Accounts with no complaints stayed at 3.41%, while those with any complaint history fell to 2.23%. Even very low complaint levels already push response down to around 2.17%.

This means there is a trust threshold, not a smooth decline. Once inbox providers lose confidence in a sender, every future survey becomes harder to notice, no matter how good the content is.

Because spam complaints rise with heavy sending, volume pressure and reputation damage reinforce each other. With the industry benchmark for healthy senders below 0.1% spam rate, even small issues can cap how much feedback a program can ever collect.
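A rough sketch of how a program might monitor itself against that benchmark is shown below. The 0.1% threshold is the one cited above; the function names and the complaint and delivery counts are hypothetical, and how a given mailbox provider actually computes its spam rate may differ.

```python
SPAM_RATE_BENCHMARK = 0.001  # 0.1% spam rate, the benchmark cited in the text


def complaint_rate(complaints: int, delivered: int) -> float:
    """Share of delivered emails that were reported as spam."""
    if delivered <= 0:
        raise ValueError("delivered must be positive")
    return complaints / delivered


def is_healthy(complaints: int, delivered: int,
               threshold: float = SPAM_RATE_BENCHMARK) -> bool:
    """True when the complaint rate stays below the benchmark."""
    return complaint_rate(complaints, delivered) < threshold


# Hypothetical monthly counts for two senders, not study data.
for name, complaints, delivered in [("sender_a", 12, 20_000),
                                    ("sender_b", 30, 20_000)]:
    rate = complaint_rate(complaints, delivered)
    status = "ok" if is_healthy(complaints, delivered) else "above benchmark"
    print(f"{name}: {rate:.2%} ({status})")
```

Tracking this number per sending domain alongside send volume makes it easier to spot the point where heavier sending starts to cost reputation.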

In practice, spam complaints act like a long-term participation tax: once trust is damaged, response rates stay structurally lower for a long time. This makes it necessary to look not at domain or transport in isolation, but at how the two interact as a single trust system.

Domain × Transport Interaction: Infrastructure Synergy

The best-performing setup (Own Domain + Google Workspace) achieves a 3.71% response rate, 27% higher than the weakest configuration (Own Domain + Mandrill at 2.92%).

The second-best setup, Retently Domain + Mandrill (3.65%), performs very close to the top one. This indicates that both branded sending with Google SMTP and a well-managed platform domain with Mandrill can achieve similarly strong response outcomes. Looking at domain in isolation, the gap between configurations is relatively narrow, suggesting that performance is shaped less by domain label alone and more by how domain and delivery method are combined.

Therefore, this interaction shows that:

- Domain trust and transport reputation reinforce each other

- A strong domain cannot fully compensate for weak transport

- A strong transport cannot fully compensate for a weak sender identity

Participation results from the combined credibility of identity and delivery path.

Interpretation: Response Rate as a Trust Signal, Not Just a Behavioral One

What looks like simple “email performance” is actually a layered trust system:

- Recognition trust: “Do I know this sender?” (domain)

- Placement trust: “Did this reach my main attention space/my main inbox?” (transport + provider)

- Reputation trust: “Is this sender respectful of my inbox?” (complaints, frequency, hygiene)

Only when all three align does the customer even see the question in a state where answering is plausible.

Implications for 2026

Response rate optimization is increasingly tied to infrastructure quality. In 2026:

- Deliverability will act as a hard ceiling on participation.

- Trust cues embedded in the technical setup will shape who even has the opportunity to respond.

- CX teams will need to treat domain strategy, transport choice, and reputation management as part of the listening architecture, not as IT hygiene.

In short, before a customer decides whether to answer, the system has already decided whether the question deserves to be seen.

7. Relationship Depth: Lifecycle as a Response Multiplier

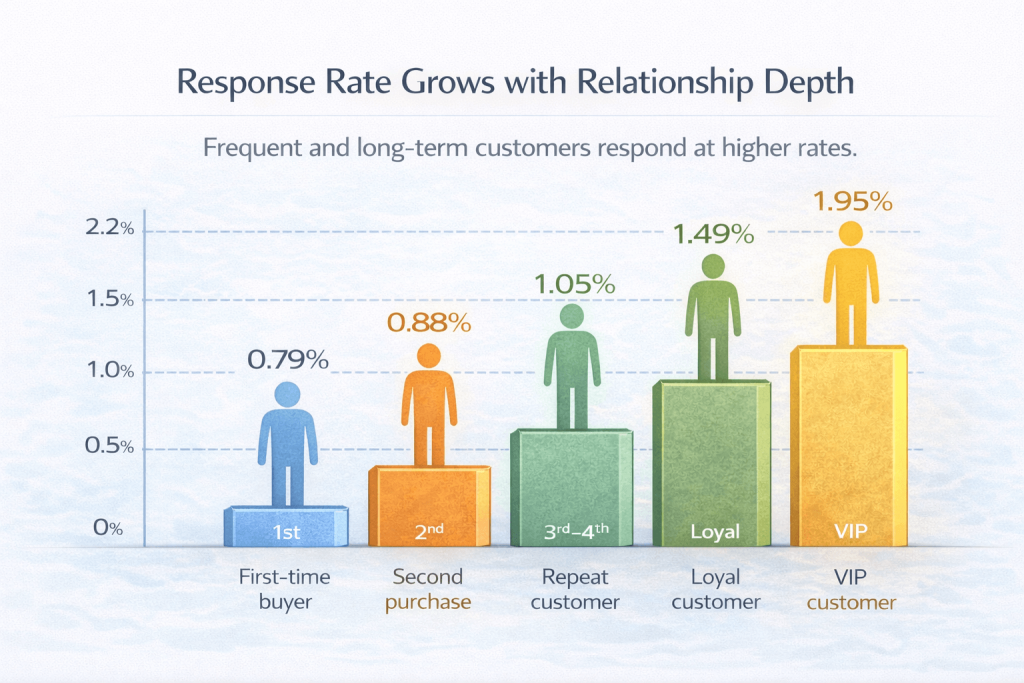

Beyond channels, survey types, and infrastructure, one of the most powerful predictors of response behavior is the depth of the relationship itself. The data shows a clear and consistent pattern: the longer and more frequently a customer interacts with a brand, the more willing they are to give feedback. In practice, response rate compounds with relationship history, not with spend alone.

Response Rate by Order Frequency

Response rates increase almost monotonically with order frequency. Customers who have placed ten or more orders are about 2.5 times as likely to respond as first-time buyers (1.95% vs. 0.79%), showing a clear progression in participation as the relationship deepens. In practical terms, first-time buyers are the hardest group to engage, while loyalty consistently translates into higher survey engagement.

This is not a marginal effect. It represents a structural shift in willingness to engage, where relationship history, not just recent experience, becomes the main driver of who is willing to speak.

Order Frequency vs. Total Spend

Interestingly, the same pattern does not appear when customers are segmented by lifetime value alone. Across spend brackets – from low spenders to high-value customers – the response rate remains relatively flat between 0.92% and 1.17%, with no meaningful upward trend as spending increases. In other words, lifetime value shows no significant correlation with response rate.

A customer who placed one large order behaves, from a feedback perspective, much like any other first-time buyer. In contrast, a customer who placed several small or medium orders shows progressively higher engagement. This confirms that what drives survey participation is not how much money a customer has spent, but how often and how long they have been in a relationship with the brand.

This distinction highlights an important psychological difference:

- Total spend reflects economic value.

- Order frequency reflects relationship continuity and habit formation.

Feedback willingness is driven far more by the latter. Order frequency, not total spending, is the true multiplier of survey engagement.
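One way to check this distinction in your own data is to compute response rate twice over the same survey log: once grouped by order count and once by spend bracket. The sketch below is only an illustration. The record fields (`orders`, `spend`, `responded`), the bucket boundaries, and the rows themselves are made up to mirror the pattern described above; they are not Retently data or schema.

```python
from collections import defaultdict
from typing import Callable, Iterable

# Hypothetical survey log: one row per survey sent (toy data, not real results).
SURVEYS = [
    {"orders": 1, "spend": 40.0, "responded": False},
    {"orders": 1, "spend": 900.0, "responded": False},
    {"orders": 1, "spend": 35.0, "responded": True},
    {"orders": 1, "spend": 600.0, "responded": False},
    {"orders": 4, "spend": 120.0, "responded": True},
    {"orders": 4, "spend": 150.0, "responded": False},
    {"orders": 12, "spend": 300.0, "responded": True},
    {"orders": 12, "spend": 950.0, "responded": True},
]


def order_bucket(row: dict) -> str:
    """Bucket by relationship depth (assumed boundaries)."""
    if row["orders"] >= 10:
        return "10+ orders"
    return "first order" if row["orders"] == 1 else "2-9 orders"


def spend_bucket(row: dict) -> str:
    """Bucket by lifetime spend (assumed boundary at 200)."""
    return "high spend" if row["spend"] >= 200 else "low spend"


def response_rate_by(rows: Iterable[dict], key: Callable[[dict], str]) -> dict:
    """Return {bucket: responses / surveys sent} for the given bucketing function."""
    sent = defaultdict(int)
    answered = defaultdict(int)
    for row in rows:
        bucket = key(row)
        sent[bucket] += 1
        answered[bucket] += int(row["responded"])
    return {bucket: answered[bucket] / sent[bucket] for bucket in sent}


by_orders = response_rate_by(SURVEYS, order_bucket)
by_spend = response_rate_by(SURVEYS, spend_bucket)
print(by_orders)
print(by_spend)
```

In this toy sample the rate climbs across the order buckets while the two spend buckets come out equal. Running the same two groupings on a real survey log is a quick way to see whether a program shows the same split.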

Trust Accumulation and the Willingness to Speak

Three mechanisms explain why relationship depth acts as a response multiplier:

- Familiarity and recognition: Repeat customers recognize the brand’s communication patterns and are less likely to treat survey invitations as noise or risk.

- Psychological ownership: With repeated interactions, customers begin to feel that their experience “matters” to the brand and that their opinion can influence outcomes.

- Reciprocity and investment: Having already invested time, money, and attention, loyal customers are more inclined to invest a bit more by sharing feedback.

First-time buyers, by contrast, are still in an evaluation mode. Trust is unformed, the relationship is tentative, and the perceived benefit of responding is low. The question “why should I help?” has not yet been answered.

Implications for 2026

This lifecycle effect reframes how response rates should be interpreted and managed:

- Low response from first-time buyers is not a failure of survey design; it is a feature of early-stage trust.

- High response from repeat and VIP customers is not accidental; it reflects accumulated relational capital.

For 2026, effective listening strategies will need to be lifecycle-aware:

- Transactional, low-friction surveys for early-stage customers

- Richer, more reflective questions for mature relationships

- Different frequency caps and timing rules by lifecycle stage

In essence, response rate is not only a function of how and when we ask, but also how long the customer has known us. Trust compounds, and so does the willingness to speak.

What All These Effects Have in Common

Across channels, metrics, infrastructure, and lifecycle stages, the same underlying pattern repeats: customers do not decide to answer based on the survey alone, but based on the state they are in when the question reaches them.

Response behavior is shaped by four conditions that operate together:

- Is attention available? Not in general, but in this moment, in this environment.

- Is the mental effort justified? Not in absolute terms, but relative to what the customer is currently doing.

- Is the request credible and safe? Not in theory, but in the instant recognition of the sender and the context.

- Does the relationship make the effort feel worthwhile? Not because of spend, but because of continuity and future relevance.

What changes across email vs in-app, NPS vs CSAT, own vs shared domain, or first-time vs loyal customers is not the logic of decision-making, but the values of these four variables.

The same behavioral system is at work in all cases. The surface differences are expressions of how attention, effort, trust, and commitment are distributed in each context.

Cross-Validation with External Benchmarks

Our 2025 dataset already explains what happened (and why) inside real ecommerce survey programs at scale. This section is simply the “sanity check” layer: do external benchmarks point in the same direction and where does Retently data tell a more specific story than public averages can?

1. Email Engagement Pressure

Retail click and conversion-to-click decline (GDMA, Zeta) → what that means for survey invite viability

Even if survey questions, timing, and incentives stayed the same, the email environment got tougher. Two benchmarks show retail engagement pressure surfacing specifically in click behavior (the same action a survey invite depends on).

- GDMA International Email Benchmark 2025 reports retail campaigns have seen a ~2.8 pp decline in click-to-open (now ~6.3%) and a ~1.4 pp decline in CTR (now ~2.3%) since 2022.

- Zeta Q2 2025 Email Benchmark reports click-to-open rates declining across most verticals, and for retail specifically, conversion-to-click slipped from 15.6% to 14.6%.

Why this matters for surveys: email survey programs are “click asks.” If retail click behavior is under pressure, survey response ceilings via email get lower unless you win on relevance, deliverability, or context (in-app).

This aligns cleanly with our data: email response averages ~3.24%, while in-app is ~32.34% (10× higher), suggesting the main limiter isn’t “people hate giving feedback,” but the weakening ability of the inbox to convert attention into action on its own.

2. The “Silent Customer” Trend

Qualtrics/XM Institute evidence of declining feedback propensity since 2021

Our dataset shows declining elasticity under load (Q4 saturation) and low baseline email response. External CX research adds a broader behavioral backdrop: consumers are increasingly likely to stay silent even when they have an experience worth talking about.

- The 2025 Consumer Experience Trends report notes that, compared to 2021 and amid the AI hype, consumers are 7 points less likely to say something after a good experience and 8 points less likely after a bad one.

- The 2025 “How Consumers Share Feedback” study is more specific and reports less direct feedback to companies vs 2021 (e.g., after a very good experience, direct feedback to the company down 6.5 points; after a very poor experience, down 7.7 points).

How it fits our story: it supports the idea that response rate is no longer a “sampling optimization problem.” It’s increasingly an attention + trust + perceived usefulness problem, consistent with our mechanisms section (attention economics, trust signaling, cognitive friction).

3. Inbox Governance Tightening

Google, Yahoo, Microsoft bulk sender requirements → why infrastructure now shapes response rates

Retently’s deliverability findings (domain, SMTP transport, provider effects, spam-complaint correlation) look even more “inevitable” once you overlay what inbox providers started enforcing.

- Google: if you send 5,000+ messages/day, marketing and subscribed messages must support one-click unsubscribe (plus broader sender guidelines).

- Yahoo: best practices include keeping spam rate below 0.3% and implementing list-unsubscribe / one-click unsubscribe support.

- Microsoft (Outlook.com): for domains sending over 5,000 emails/day, Outlook requires SPF, DKIM, and DMARC; non-compliant messages may be routed to Junk and eventually rejected.
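A rough pre-send check against the thresholds mentioned in this report can be sketched in Python. The 0.1% and 0.3% spam-rate limits and the 5,000 messages/day bulk threshold come from the text; the class, function, and field names are assumptions for illustration. Treating spam rate as complaints divided by sends is also a simplification, since providers measure it on their own side and enforce it with more nuance than a single comparison.

```python
from dataclasses import dataclass

HEALTHY_SPAM_RATE = 0.001  # 0.1%, the healthy-sender benchmark cited in this report
MAX_SPAM_RATE = 0.003      # 0.3%, the ceiling in Yahoo's best practices
BULK_SENDER_DAILY = 5_000  # messages/day at which bulk-sender rules apply


@dataclass
class SenderStats:
    """Hypothetical daily snapshot for one sending domain."""
    sent: int
    spam_complaints: int
    has_spf: bool
    has_dkim: bool
    has_dmarc: bool
    one_click_unsubscribe: bool


def deliverability_warnings(stats: SenderStats) -> list[str]:
    """Return human-readable warnings; an empty list means no rule was tripped."""
    warnings = []
    # Simplification: complaints divided by sends, not a provider-measured rate.
    spam_rate = stats.spam_complaints / stats.sent if stats.sent else 0.0
    if spam_rate >= MAX_SPAM_RATE:
        warnings.append("spam rate at or above 0.3%")
    elif spam_rate >= HEALTHY_SPAM_RATE:
        warnings.append("spam rate at or above the 0.1% healthy benchmark")
    if stats.sent >= BULK_SENDER_DAILY:
        if not (stats.has_spf and stats.has_dkim and stats.has_dmarc):
            warnings.append("bulk sender missing SPF, DKIM, or DMARC")
        if not stats.one_click_unsubscribe:
            warnings.append("bulk sender missing one-click unsubscribe")
    return warnings


risky = SenderStats(sent=20_000, spam_complaints=30, has_spf=True,
                    has_dkim=True, has_dmarc=False, one_click_unsubscribe=True)
for warning in deliverability_warnings(risky):
    print(warning)
```

Here the example sender trips two rules: a 0.15% complaint rate and a missing DMARC record at bulk volume.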

Why this matters for surveys: as inbox rules tighten, trust infrastructure becomes participation infrastructure. That matches our internal results:

- Retently’s shared domain slightly outperforms own domain (3.66% vs 3.12%), suggesting that domain label alone is not the dominant driver; the interaction with transport and reputation matters more.

- Google Workspace SMTP outperforms Mandrill, particularly under seasonal pressure.

- Spam complaints correlate with response suppression, indicating that once reputation deteriorates, visibility declines and participation follows.

How Retently Is Strengthening Deliverability in 2026

The 2025 data makes one constraint clear: infrastructure shapes participation. As inbox rules tighten and spam thresholds narrow, response rates are increasingly capped by sender reputation and placement stability. Retently is addressing this proactively. Some of these improvements are already live, others are actively being rolled out.

Protecting Sender Reputation

When a recipient marks a survey as spam, Retently automatically suppresses that address across the platform, preventing repeated exposure that would accelerate domain penalties. Hard bounces are removed immediately, and disposable or masked emails are filtered out before they distort engagement data or weaken sender credibility.

Domain health is continuously monitored. If reputation dips, sending can be throttled or paused automatically. New domains are gradually warmed up to prevent early high-volume shocks that permanently limit inbox placement. The focus is simple: stop reputation decay before it compounds.
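To make the suppression rules above concrete, here is a minimal Python sketch of that kind of logic. It is not a description of Retently’s actual implementation: the class name, the event labels (`spam_complaint`, `hard_bounce`), and the disposable-domain entries are all hypothetical.

```python
from dataclasses import dataclass, field

# Hypothetical example entries; a real filter would rely on a maintained list.
DISPOSABLE_DOMAINS = {"mailinator.com", "example-temp.invalid"}


@dataclass
class SuppressionList:
    """Tracks addresses that should no longer receive surveys."""
    suppressed: set[str] = field(default_factory=set)

    def record_event(self, email: str, event: str) -> None:
        """Suppress an address after a spam complaint or a hard bounce."""
        if event in {"spam_complaint", "hard_bounce"}:
            self.suppressed.add(email.lower())

    def can_send(self, email: str) -> bool:
        """Allow only addresses that are neither suppressed nor disposable."""
        address = email.lower()
        domain = address.rsplit("@", 1)[-1]
        return address not in self.suppressed and domain not in DISPOSABLE_DOMAINS


suppression = SuppressionList()
suppression.record_event("Pat@example.com", "spam_complaint")

print(suppression.can_send("pat@example.com"))     # False: complained earlier
print(suppression.can_send("new@mailinator.com"))  # False: disposable domain
print(suppression.can_send("sam@example.com"))     # True: still eligible
```

Addresses are lowercased before comparison so that a complaint from `Pat@example.com` also blocks `pat@example.com`.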

Making Deliverability Risk Visible

Retently surfaces unsubscribe trends, spam complaint rates, bounce behavior, and campaign-level anomalies in real time. Campaigns and segments can be compared to identify which survey types generate disproportionate friction. Unsubscribe reasons are categorized so brands can adjust frequency and targeting early.

Provider-level analytics (Gmail, Outlook, Yahoo) expose inbox-specific placement differences, making structural constraints visible and actionable.

Aligning Identity and Transport

Retently supports verified own-domain sending with simplified DNS setup – a single authentication record instead of the typical multi-record setup – improving inbox placement and brand trust. Smart routing selects the most stable delivery path based on reputation, volume, and provider behavior. Authentication reliability is monitored to prevent silent failures caused by expired credentials or provider limits.

Giving Brands Control

Retently provides self-service suppression controls, allowing brands to manage their own exclusion lists – blocking internal addresses, competitor domains, or other high-risk segments – and to view globally suppressed contacts in one place.

Customers sending surveys through their own Google account get proactive alerts when token issues arise, daily send limit warnings, and automatic error recovery, instead of emails silently failing.

This ensures that sender discipline is enforced at the infrastructure level and actively managed at the account level as well.

Reducing Inbox Dependence

Q4 showed that inbox attention weakens under seasonal pressure. Retently reduces single-channel fragility by enabling omnichannel survey delivery. If email goes unopened, follow-up can occur in-app or post-checkout. Reminder sequences can span channels rather than repeating inbox-dependent asks. Deliverability is no longer just placement. It is preserving visibility across attention environments.

Retently acknowledges that deliverability pressure is structural, not temporary. The response reflects that reality: suppress negative signals early, monitor domain health continuously, surface friction before it compounds, align identity and transport discipline, and reduce dependence on a single attention channel. Deliverability is participation infrastructure.

2026 Outlook: Structural Trends, Not Tactical Fluctuations

If 2025 taught us anything, it’s that response rate isn’t something you “optimize” with a few tweaks. It’s increasingly shaped by structural factors: where attention lives, how trust is signaled, and whether the ask feels worth the effort in the moment.

So instead of predicting small up-and-down changes, this outlook focuses on the bigger shifts that will define response rates in 2026.

In-flow vs inbox trajectories

In 2026, survey programs are going to split into two paths:

1) In-flow feedback will keep getting stronger

In-app and in-experience prompts benefit from a structural advantage: they appear inside the customer’s current context, when attention is already allocated and the experience is still fresh. Retently 2025 data already shows this clearly: in-flow response rates operate in an entirely different range than email.

The likely 2026 trajectory:

- In-flow will become the default “high-signal” listening layer

- Brands will expand it because it scales even during peak periods

2) Inbox-based feedback will keep getting tougher

Email will remain essential for reach and for relationship-level measurement, but the inbox is becoming a more competitive and more regulated space. With declining click behavior, stricter sender requirements, and growing fatigue, email response rates are likely to stay capped for high-volume senders.

The likely 2026 trajectory:

- Improvements will come mostly from infrastructure, segmentation, better timing, and trust signals, not from volume or copy alone

- Email becomes a targeted, selective channel, not a mass listening engine

Response Rate as a Trust Metric

From “collection efficiency” to “relationship health proxy”

Traditionally, teams treated response rate like a productivity metric: “How efficiently can we collect enough answers?” In 2026, response rate increasingly behaves like something deeper: a proxy for relationship health.

Because responding requires:

- trust in the sender

- belief that the feedback will matter

- willingness to invest cognitive effort

- tolerance for being asked again

When response rates fall, it’s not always a “survey problem.” It can be a signal that:

- the brand is over-communicating

- the ask feels generic or automated

- the customer doesn’t feel recognized

- the relationship hasn’t matured enough to justify the effort

- inbox placement and reputation are quietly affecting trust

This is why our deliverability findings matter so much: domain, transport, complaint rates, and mailbox differences aren’t “technical details.” They shape whether customers perceive your feedback request as legitimate and worth attention.

So in 2026, the practical shift is this:

- Stop treating response rate as a KPI you chase.

- Start treating it as a signal you interpret.

A stable response rate in a noisy environment often reflects strong trust. A declining one often shows you where trust is breaking before churn or revenue metrics catch up.

From Mass Sampling to Precision Listening

Lifecycle-aware, moment-aware, volume-controlled programs

The biggest strategic shift in 2026 is moving from “ask everyone” to “ask the right people at the right moment.” Retently’s 2025 findings point to three design principles that will define high-performing programs next:

1) Lifecycle-aware listening

First-time buyers behave differently than repeat customers. VIPs respond differently than casual purchasers. Treating them as one pool guarantees wasted volume and distorted samples.

2026 programs will:

- ask less of first-time buyers (or ask differently)

- lean into repeat and loyal customers for richer signal

- reserve relationship-level questions for the segments most willing to answer them

2) Moment-aware listening

The “right” question depends on what just happened. Specificity reduces cognitive friction and raises participation.

2026 programs will:

- trigger feedback based on events (delivery, support resolution, onboarding completion)

- align the question type with the experience type

- stop sending abstract relationship questions on a fixed schedule to everyone

3) Volume-controlled listening

The Q4 collapse is the warning label: volume can break participation.

2026 programs will:

- cap frequency per customer

- apply suppression rules (especially during peak seasons)

- optimize for representativeness, not maximum sends

This is what “precision listening” really means.
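The volume-controlled principle, combined with lifecycle awareness, can be sketched as a simple eligibility check in Python. The stage names, the minimum gaps in days, and the peak-season multiplier are placeholder assumptions for illustration, not recommendations drawn from the data.

```python
from datetime import date, timedelta
from typing import Optional

# Assumed caps: minimum days between surveys for each lifecycle stage.
MIN_DAYS_BETWEEN_SURVEYS = {"first_time": 90, "repeat": 45, "loyal": 30}
PEAK_SEASON_MULTIPLIER = 2  # assumed: double the gap during peak periods


def may_survey(stage: str, last_surveyed: Optional[date], today: date,
               peak_season: bool = False) -> bool:
    """Return True if enough time has passed since the customer's last survey."""
    if last_surveyed is None:
        return True  # never surveyed before, so no cap applies yet
    gap_days = MIN_DAYS_BETWEEN_SURVEYS[stage]
    if peak_season:
        gap_days *= PEAK_SEASON_MULTIPLIER
    return today - last_surveyed >= timedelta(days=gap_days)


today = date(2026, 12, 1)
last = date(2026, 10, 15)  # 47 days earlier

print(may_survey("repeat", last, today))                    # True: 47 >= 45
print(may_survey("repeat", last, today, peak_season=True))  # False: 47 < 90
print(may_survey("first_time", last, today))                # False: 47 < 90
```

Keeping the caps in one table makes it easy to tighten them per segment or per season without touching the sending logic.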

The Big Takeaway for 2026

Response rates won’t be won by clever wording alone. The programs that perform best in 2026 will be the ones that redesign where feedback happens, who gets asked, and how often.

Because in the new reality, getting a response is about asking smarter inside the moments and relationships where customers still feel it’s worth answering.

What This Data Changes in How You Should Think About Response Rates in 2026

Let’s start with the uncomfortable truth the data makes very clear:

1. Email has a ceiling. And no amount of “optimization” can push past it.

Even with the best possible setup – own domain + Google SMTP – you’re still topping out at around 3.7%. Meanwhile, in-app surveys live in a completely different world, often 10× higher. So when email struggles, it’s not because your subject line isn’t clever enough. It’s because the inbox itself has become a tough place to ask for attention.

2. Some things don’t slowly decline. They suddenly break.

Q4 isn’t a gentle slope. It’s a cliff. Mandrill performs well when things are calm, then collapses when volume and competition explode. Spam complaints show the same pattern: once trust thresholds are crossed, response doesn’t fade – it drops. Response rate works in “regimes,” not smooth curves. You’re either in a stable zone… or you’re not.

3. Infrastructure matters more than most CX teams like to admit.

Inside the inbox, infrastructure defines the performance ceiling under which all other optimizations operate. Sender identity and deliverability aren’t background plumbing. They’re front-row drivers of whether people even consider clicking.

4. Consistency beats peak performance.

Mandrill can hit high numbers in easy quarters. Google SMTP never spikes that high, but it also doesn’t collapse in Q4. That makes transport choice a risk decision, not just a performance one. The real question isn’t “Who wins on a good day?” It’s “Who still shows up when inboxes are crowded and filters get strict?”

5. Personalization is not magic. It’s situational.

The vertical data is blunt about this. In Gifts & Beauty, personalization lifts response strongly. In Food & Home, it actually hurts. Same tactic, opposite outcome. So “personalize everything” isn’t a safe best practice; it’s a bet that only pays off in the right emotional context.

6. Loyalty beats money when it comes to getting people to talk.

Customers with 10+ orders respond 2.5× more than first-time buyers. But lifetime spend barely changes response at all. One big order doesn’t create willingness. Repeated interaction does. Trust and habit matter more than revenue.

7. More volume can actually destroy your signal.

The Q4 data shows it clearly: past a certain point, sending more surveys doesn’t give you more insight. It gives you less. Attention saturates, irritation grows, and response elasticity collapses. Frequency and seasonality aren’t tactical details; they decide whether your listening system stays healthy or starts eating itself.

8. CES and CSAT are easier on the brain.

Their higher response rates don’t mean customers “care less” about brands than tasks. They mean it’s simply easier to answer “Was this easy?” or “Was this good?” than “Would I recommend you to a friend?” NPS asks for synthesis and identity. CES and CSAT ask for recognition. Lower effort = higher participation.

9. Response rate is an early warning system.

Spam complaints and weaker-performing configurations correlate with suppressed response, even when emails are technically delivered. In our data, participation drops first. That makes response rate an early warning system for relationship and reputation decay.

10. Every environment has its own response ceiling.

AOL and Yahoo allow higher participation than Gmail and Outlook. In-app operates in a totally different attention economy. Each environment sets a hard upper limit on what’s possible. Optimization can move you within that limit, but it can’t break it.

Three things must line up before anyone answers:

- The message must be seen (domain, transport, provider, complaints).

- The question must feel easy and relevant (survey type, timing, context).

- The relationship must make the effort feel worth it (lifecycle, category, emotional involvement).

Miss any one of these, and response drops, no matter how good the other two are.

11. Not all “high response” means the same thing.

In-app and email sample broad audiences under attention limits. Link and QR surveys capture highly motivated minorities with near-perfect completion. That’s not better response, it’s different selection. Completion reflects intent, not representativeness.

12. Category emotion changes everything.

Garden & Pets and Health & Beauty cut through even in crowded inboxes. Fashion, Food, and Home stay weak even when placement is good. Emotional relevance doesn’t just lift numbers, it reshapes the whole response curve.

Put simply:

In 2026, response rate isn’t something you squeeze out with clever wording. It’s what happens when attention, trust, effort, and relationship depth all happen to line up at the same moment.

Conclusion: Redefining What “Good Response Rate” Means in 2026

If there’s one big takeaway from all this, it’s that response rate is no longer just a “number to optimize.” In 2026, it’s more like a health signal of the whole relationship between a brand and its customers.

People don’t ignore surveys because they suddenly hate giving feedback. They ignore them because the moment is wrong, the context is off, the trust isn’t there, the question feels heavy, or the message never really had a fair chance to be seen in the first place.

Think about what has to line up for someone to actually stop and answer:

- They need to be in the right context – ideally still inside the experience, not buried in an overloaded inbox hours later.

- They need to trust the sender – recognize the brand, feel safe, feel that this isn’t just another automated blast.

- The question has to feel relevant – connected to what just happened, not a generic ritual.

- The mental effort has to be low enough – reacting is easy, reflecting deeply is harder.

- And underneath it all, the infrastructure has to work – good deliverability, clean reputation, respectful frequency.

When those pieces come together, people answer almost naturally. When they don’t, no amount of copy tweaking or incentive testing can fully compensate.

So the mindset shift for 2026 is a simple but powerful one.

It’s not: “How do we squeeze more answers out of the same audience?”

It’s: “How do we create the right moment, with the right trust, for the right person, so answering actually makes sense?”

A “good” response rate in 2026 won’t be a universal benchmark or a magic percentage. It will look different by channel, by vertical, by lifecycle stage, by season. What will make it “good” is that it comes from:

- being present in the flow of experience

- being recognizable and trustworthy

- being timely and emotionally aligned

- being easy to answer

- and not asking more than the relationship can reasonably carry

In that sense, response rate is no longer something you simply optimize, it’s something you earn.

Christina Sol

Alex Bitca